Intro to Web Proxies

Modern web and mobile apps spend most of their time communicating with back-end services — sending data, receiving responses, and then rendering or processing that data on the client (browser, mobile app, etc.). Because so much logic now lives on servers, testing and securing those back-end endpoints is a major focus of web application penetration testing. To inspect and manipulate the traffic between clients and servers we use web proxies.

What is a web proxy?

A web proxy is a tool placed between an application (browser or mobile app) and the server it talks to. It captures every HTTP(S) request and response, effectively acting as a man-in-the-middle (MITM) for web traffic. Unlike general-purpose packet capture tools (e.g., Wireshark), which examine all network packets, web proxies focus on web protocols (commonly HTTP on port 80 and HTTPS on port 443) and present the traffic in a human-friendly way.

Web proxies are indispensable for web pentesting because they let you:

- See all HTTP requests a client issues and the server responses.

- Pause (intercept) requests to edit headers, body, or parameters before forwarding them.

- Replay or resend requests with modifications to test server behavior.

Why proxies are essential for pentesters

Compared to older command-line techniques, modern web proxies make capturing, modifying, and replaying requests much faster and less error-prone. They let you perform targeted manipulations and observe server reactions in real time — an essential skill for finding and proving vulnerabilities.

Common uses of web proxies

Beyond simple request capture/replay, web proxies support many workflows useful to both offensive and defensive testers:

- Automated and manual vulnerability scanning

- Fuzzing web inputs and endpoints

- Crawling the application to build a site map and discover endpoints

- Mapping application behavior and parameter usage

- Deep request/response analysis (headers, JSON, cookies, tokens)

- Testing web configuration and security headers

- Assisting manual code review by showing runtime traffic

Tools we’ll cover

In this module we’ll focus on the two most widely used free/paid web proxies in the pentesting community:

- Burp Suite — feature-rich, industry standard (community and professional editions).

- OWASP ZAP (Zed Attack Proxy) — open-source, extensible, and widely used for automated scanning and manual testing.

Note: This module will concentrate on how to operate web proxies and which proxy features match common web testing tasks. Specific classes of web attacks (XSS, SQLi, SSRF, etc.) are covered in dedicated web modules and will be referenced where relevant.

Intercepting Web Requests

Intercepting, Modifying, and Forwarding HTTP Requests

Once your proxy is running and your browser/app is configured to use it, you can capture and tamper with the HTTP traffic the application sends. The basic workflow is:

- Enable interception

  - Turn on the proxy’s intercept feature (Burp: Proxy → Intercept → Intercept is on; ZAP: Break or set breakpoints).

  - Trigger the action in the application (click a button, submit a form) so the request is sent from the client to the proxy.

- Inspect the request

  - The request will pause in the proxy UI. Look at the request line, headers, cookies, query string, and body (JSON, form data, multipart).

  - Pay attention to Content-Type, Content-Length, auth headers (Authorization, cookies, CSRF tokens), and any custom headers.

- Modify safely

  - Edit what you need: headers, parameter values, JSON fields, or even the HTTP method.

  - If you change the body size, update Content-Length or let the proxy recalculate it. Many proxies handle this automatically; if not, you must update it manually.

  - For JSON changes, keep correct syntax (quotes, commas). Breaking syntax will usually cause the server to reject the request or return a parse error. You can do this deliberately when testing input handling, but expect errors.

- Forward, drop, or respond

  - Forward (Forward / Action → Forward): send the modified request to the server and watch the response.

  - Drop / Cancel: stop the request from reaching the server if you want to test client-only behavior or prevent dangerous actions.

  - Respond with custom data: some proxies let you craft a fake response locally (useful to test client-side handling without touching the server).

- Replay and iterate

  - Use Repeater (Burp) or Manual Request Editor (ZAP) to resend modified versions repeatedly without re-triggering the app. This is ideal for fine-grained fuzzing or confirming an exploit.

  - Use Compare / History to track differences between original and modified responses.

- Handle authentication and state

  - Keep CSRF tokens, session cookies, and OAuth tokens in mind: modifying them or reusing stale ones will often fail.

  - If your edits break authentication or authorization (e.g., you change the user ID), expect the server to reject the request with a 401/403.

- Use shortcuts & helpers

  - Interception rules / breakpoints: pause only requests that match patterns (path, method, header). This saves time when the app sends many background requests. Match-and-replace rules can apply a recurring edit automatically to every matching request.

  - Format/Beautify: pretty-print JSON or URL-encoded bodies for easier editing.

  - Extensions/Plugins: use community add-ons for automated tampering (e.g., param mangling, encoding tricks).

- Watch for HTTPS gotchas

  - If HTTPS is in use, ensure the proxy’s CA certificate is installed on the client. Otherwise you’ll see certificate warnings or the client will block connections.

  - Mobile apps may implement certificate pinning; use app hooking or emulator settings to bypass pinning during tests.

- Log everything & be cautious

  - Save the original request and modified variants in your project history (evidence).

  - Don’t perform destructive actions on production systems without authorization. Confirm scope and get written permission.
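The "Modify safely" step can be illustrated with a small sketch of what a proxy does when it recalculates Content-Length after a body edit. The function name `replace_body`, the `/ping` path, the `target.example` host, and the `ip` parameter are assumptions made for illustration, not taken from any particular tool.

```python
def replace_body(raw_request: str, new_body: str) -> str:
    """Swap the body of a raw HTTP/1.1 request and fix Content-Length."""
    head, _, _old_body = raw_request.partition("\r\n\r\n")
    lines = head.split("\r\n")
    # Drop any stale Content-Length header (header names are case-insensitive).
    kept = [l for l in lines if not l.lower().startswith("content-length:")]
    # Content-Length counts bytes, not characters.
    kept.append(f"Content-Length: {len(new_body.encode('utf-8'))}")
    return "\r\n".join(kept) + "\r\n\r\n" + new_body


# Hypothetical intercepted request (path, host and parameter are made up).
original = (
    "POST /ping HTTP/1.1\r\n"
    "Host: target.example\r\n"
    "Content-Type: application/x-www-form-urlencoded\r\n"
    "Content-Length: 4\r\n"
    "\r\n"
    "ip=1"
)
modified = replace_body(original, "ip=127.0.0.1")
print(modified)
```

If the header were left at its old value, the server would likely read only part of the new body or wait for bytes that never arrive, which is why the proxies update it for you.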

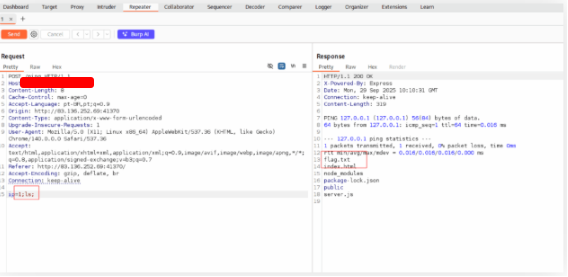

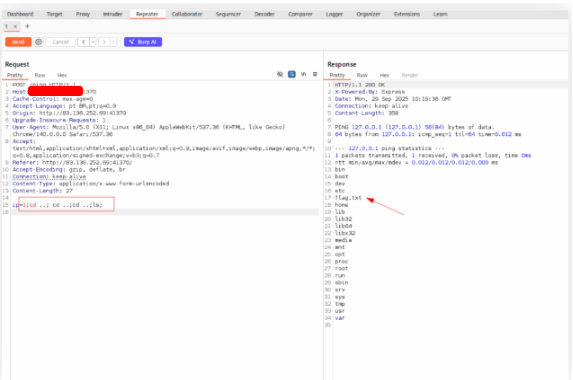

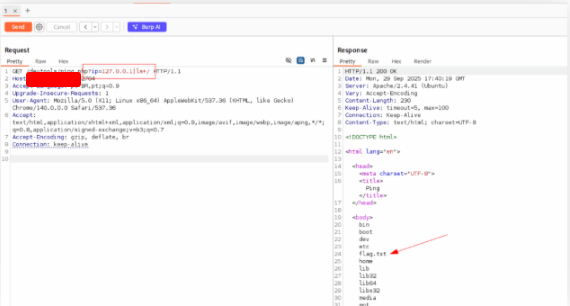

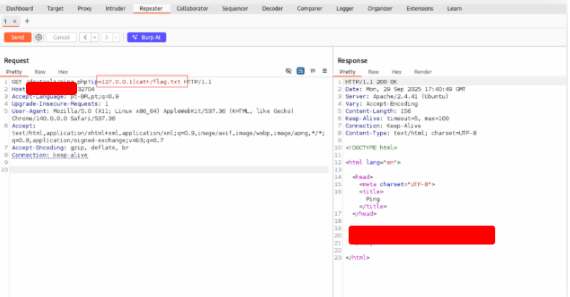

Try intercepting the ping request on the server shown above, and change the post data similarly to what we did in this section. Change the command to read ‘flag.txt’

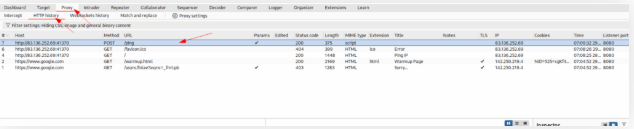

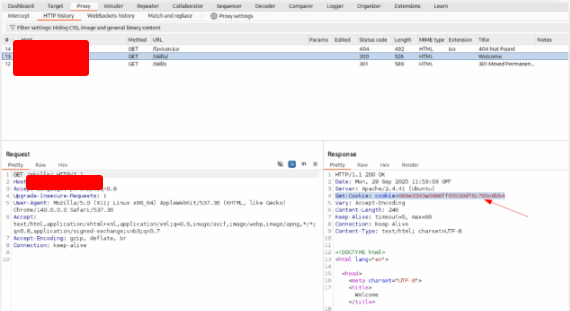

Open Burp Suite and the page of the exercise.

Insert any value in the field and click the Ping button. In Burp Suite, go to Proxy → HTTP history.

Right-click the request and choose Send to Repeater. In the Repeater tab, use the ls command to list the files in the current directory.

Now that we know the file’s name, use the cat command to read the flag.

Repeating Requests

Earlier we bypassed the input validation and used a non-numeric value to trigger a command injection on the remote server. To run another command the manual way, we’d have to intercept the request again, replace the payload with the new command, forward the request, and then check the browser for the output.

Doing that cycle — intercept, edit, forward, verify — for every single command quickly becomes tedious. Each execution can take five or six steps, and repeated dozens or hundreds of times this wastes a lot of time.

That’s where request repeating comes in. With this feature you can resend any request that has already passed through the proxy. You can quickly tweak the request (for example, change the injected command) and resend it from within the proxy tool, then review the server’s response in the proxy UI — all without re-intercepting the traffic from the client. This makes iterative testing (enumeration, fuzzing, payload tuning) much faster and far less error-prone.
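As a rough sketch of the idea, the loop below resends one request body per payload and collects the responses, which is essentially what a repeater automates. The `ip` parameter name, the `;` command separator, and the `fake_send` stand-in (used here instead of a real network call) are assumptions for illustration.

```python
from typing import Callable, Dict, Iterable
from urllib.parse import urlencode


def repeat_with_payloads(
    send: Callable[[str], str],
    param: str,
    payloads: Iterable[str],
) -> Dict[str, str]:
    """Resend the same request once per payload and collect the responses."""
    results = {}
    for payload in payloads:
        body = urlencode({param: payload})  # form-encode the edited value
        results[payload] = send(body)
    return results


def fake_send(body: str) -> str:
    """Stand-in for the network call; it only echoes what would be sent."""
    return f"sent: {body}"


responses = repeat_with_payloads(fake_send, "ip", ["1", "1;ls", "1;cat flag.txt"])
for payload, response in responses.items():
    print(f"{payload!r} -> {response}")
```

In a real test, `send` would be replaced by a function that posts the body through the proxy; keeping it pluggable makes the loop easy to try offline first.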

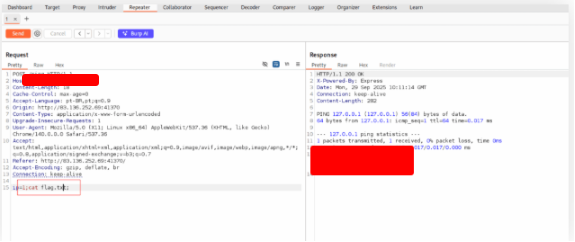

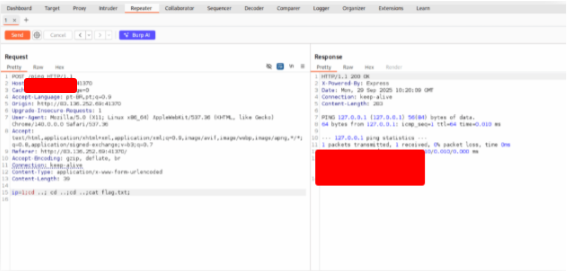

Try using request repeating to be able to quickly test commands. With that, try looking for the other flag.

Go to Burp. We already have the request. Just go up a few directories to find the flag.

Encoding/Decoding

When you edit and resend HTTP requests, you’ll often need to encode or decode data so the server accepts and correctly interprets your input. If request bodies, query strings, or headers contain special characters and aren’t encoded properly, the server may error, mis-parse the request, or behave in unexpected ways. Good tooling (Burp/ZAP) gives you quick encoders/decoders so you don’t have to do this by hand.

URL encoding (percent-encoding) — the essentials

URL encoding replaces unsafe or special characters with % followed by two hex digits. Common gotchas:

- Space: in a URL path use %20. In application/x-www-form-urlencoded (typical HTML form data / query strings) a space is often encoded as +, so be mindful which context you’re in.

- & : separates query parameters; if your parameter value contains & it must be encoded (%26) or it will be treated as a new parameter.

- # : indicates a fragment; if present in a value it must be encoded (%23) or the remainder will be treated as a fragment and never sent to the server.

There are variants (full URL-encoding, Unicode/UTF-8 percent-encoding) useful when data contains non-ASCII characters — make sure you pick the encoding appropriate for the request component (path vs query vs form body).
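The differences between these contexts can be checked quickly with Python's standard `urllib.parse` module. This is only a sketch; the sample value `a b&c#d` is made up to include the three characters discussed above.

```python
from urllib.parse import quote, quote_plus, unquote, unquote_plus

value = "a b&c#d"  # hypothetical parameter value with a space, & and #

path_style = quote(value, safe="")   # path-style: space becomes %20
form_style = quote_plus(value)       # form-style: space becomes +
double = quote(path_style, safe="")  # encoding twice turns each % into %25
non_ascii = quote("é")               # non-ASCII text is encoded as UTF-8 bytes

print(path_style)
print(form_style)
print(double)
print(non_ascii)

# Decoding must match the context the value came from.
print(unquote(path_style) == value, unquote_plus(form_style) == value)
```

Note that `unquote` leaves a literal `+` untouched, so decoding form data with it would not restore the spaces; `unquote_plus` is the form-aware counterpart.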

Other encodings you’ll commonly need

Web apps frequently use other encodings, so be ready to decode/encode:

- HTML entity escaping (e.g., for < and >)

- Unicode / UTF-8 (percent-encode multibyte chars)

- Base64 (common for cookies, tokens, POST bodies)

- ASCII hex (hex-encoded blobs)

Both Burp and ZAP provide built-in encoders/decoders for these formats.
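For reference, the same conversions are available in Python's standard library, which can be handy when scripting around the proxy. A minimal sketch, using a made-up sample string:

```python
import base64
import binascii
import html

text = "<b>guest</b>"  # hypothetical sample value

escaped = html.escape(text)                           # HTML entity escaping
b64 = base64.b64encode(text.encode()).decode()        # Base64
hexed = binascii.hexlify(text.encode()).decode()      # ASCII hex

print(escaped)
print(b64)
print(hexed)

# Each encoding is reversible, so decoding returns the original text.
print(html.unescape(escaped) == text)
print(base64.b64decode(b64).decode() == text)
print(binascii.unhexlify(hexed).decode() == text)
```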

Tool quick-reference

Burp Suite

- Repeater / any selected text → right-click: Convert Selection → URL → URL encode key characters.

- Shortcut for URL encode in Repeater: Ctrl+U (when text selected).

- Full encoder/decoder: Decoder tab (paste text → choose encode/decode type).

- Can enable “URL-encode as you type” in some fields (auto-encodes characters while editing).

OWASP ZAP

- ZAP generally URL-encodes form/query data automatically when sending requests.

- Open encoder/decoder: Ctrl+E (Encoder/Decoder/Hash dialog) — paste text and pick the operation.

Practical tips & pitfalls

- Context matters: Path vs query vs form body differ (use %20 vs + appropriately).

- Content-Length: If you change body length, let the proxy recalculate Content-Length or update it yourself.

- Double-encoding: Avoid encoding twice (e.g., %25 is an encoded %), unless the server expects it.

- Token freshness: Encoding won’t fix stale CSRF/session tokens; refresh them before replaying requests.

- When to encode: If you paste raw JSON or special chars in Repeater and the server returns a parse error, try proper encoding.

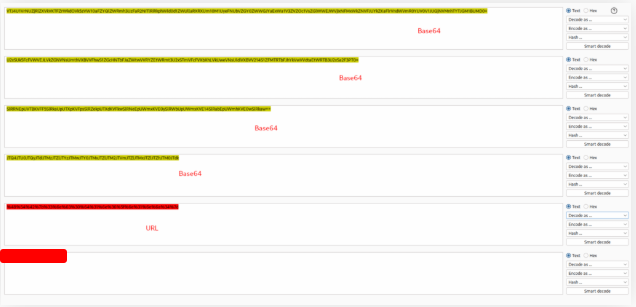

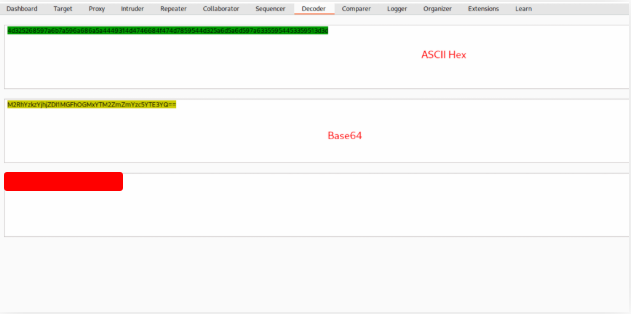

The string found in the attached file has been encoded several times with various encoders. Try to use the decoding tools we discussed to decode it and get the flag.

Download the file and paste its contents into Burp’s Decoder tab. After that, just decode in this order.
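The same layer-by-layer decoding can be sketched in Python. The order used below (URL, then Base64, then hex) and the sample string are assumptions chosen only to demonstrate the approach; they are not the order or content of the exercise file.

```python
import base64
import binascii
from urllib.parse import quote, unquote

# One decoder per layer type; each takes and returns text.
DECODERS = {
    "url": lambda s: unquote(s),
    "base64": lambda s: base64.b64decode(s).decode("utf-8"),
    "hex": lambda s: binascii.unhexlify(s).decode("utf-8"),
}


def decode_layers(data: str, order: list) -> str:
    """Apply the named decoders in sequence, printing each intermediate value."""
    for name in order:
        data = DECODERS[name](data)
        print(f"{name:>6}: {data}")
    return data


# Build a hypothetical sample: hex, then Base64, then URL encoding.
secret = "example{not-the-real-flag}"
layered = quote(
    base64.b64encode(binascii.hexlify(secret.encode())).decode(), safe=""
)

result = decode_layers(layered, ["url", "base64", "hex"])
```

Printing every intermediate value mirrors how the Decoder tab shows each stage, which makes it easier to recognise the next encoding by eye.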

Proxying tools

A key part of working with web proxies is the ability to capture and inspect the HTTP(S) traffic produced by command-line utilities and full-featured desktop (thick) clients. Intercepting this traffic exposes the exact requests and responses those programs exchange with servers, which helps you understand their behavior, spot hidden endpoints, view custom headers or tokens, and apply the same proxy capabilities you rely on when testing browsers — such as intercepting, modifying, replaying, and logging requests.

To make a specific tool send all of its web requests through your proxy, you must configure the tool to use the proxy endpoint (for example, http://127.0.0.1:8080), just like you would for a browser. How you configure that proxy differs between tools: some provide command-line flags (for instance --proxy), others respect environment variables (like HTTP_PROXY / HTTPS_PROXY), and some require editing config files or adding OS-level proxy settings. Because each application exposes proxy settings differently, you’ll often need to check the tool’s documentation or experiment to find the correct place to insert the proxy address.
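As one concrete illustration, here is how a Python script using the standard `urllib` module could be pointed at the proxy. It is a sketch under the assumption that the proxy listens on the example address above; no request is actually sent, and `target.example` in the comment is a placeholder host.

```python
import os
import urllib.request

PROXY = "http://127.0.0.1:8080"  # the example proxy endpoint from the text

# Option 1: explicit per-client configuration.
handler = urllib.request.ProxyHandler({"http": PROXY, "https": PROXY})
opener = urllib.request.build_opener(handler)
# opener.open("http://target.example/") would now be routed via the proxy.
print(handler.proxies)

# Option 2: environment variables, which many command-line tools also read.
# Child processes started from this script inherit these settings.
os.environ["HTTP_PROXY"] = PROXY
os.environ["HTTPS_PROXY"] = PROXY
```

For HTTPS targets the client would also need to trust the proxy's CA certificate, otherwise the TLS handshake is expected to fail.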

There are a few practical gotchas and tips to keep in mind when proxying non-browser tools: many CLI programs don’t validate certificates the same way browsers do, so you may need to import or trust your proxy’s CA certificate to avoid TLS failures; some apps use certificate pinning or embed custom TLS stacks that bypass system proxies; and services that use native APIs (e.g., Windows HTTP APIs) may require OS-level proxy configuration rather than per-app settings. Additionally, tools that open multiple simultaneous connections or use HTTP/2 may present more complex traffic patterns in the proxy, so learning how to filter and group requests will make analysis easier.

This section presents concrete examples showing how to route and intercept traffic from command-line and desktop clients using popular proxy tools. The procedures and features are essentially the same whether you use Burp Suite or OWASP ZAP, so examples will focus on the configuration differences you’ll encounter in common tools and on practical troubleshooting steps (certificate installation, environment variables, and dealing with pinned TLS).

Try running ‘auxiliary/scanner/http/http_put’ in Metasploit on any website, while routing the traffic through Burp. Once you view the requests sent, what is the last line in the request?

[*] Auxiliary module execution started - auxiliary/scanner/http/http_put

[*] Running against: example.com:80

[*] Sending HTTP PUT request to http://example.com/msf_test.txt

---- RAW HTTP REQUEST (as seen in Burp) ----

PUT /msf_test.txt HTTP/1.1

Host: example.com

User-Agent: Mozilla/5.0 (compatible; MSF)

Accept: */*

Connection: Close

Content-Type: application/octet-stream

Content-Length: 13

[REDACTED]

---- END REQUEST ----

[+] example.com:80 - PUT succeeded (HTTP/1.1 201 Created)

[*] Auxiliary module execution completed

Burp Intruder

Burp Suite and OWASP ZAP both include capabilities beyond a simple proxy that are invaluable during web application assessments — most notably web fuzzers and automated scanners. The integrated fuzzing engines let you perform enumeration, input manipulation, and brute-force attacks directly from the proxy interface, often replacing many command-line fuzzers such as ffuf, dirbuster, gobuster, or wfuzz for certain workflows.

Burp’s fuzzer, Burp Intruder, can target pages, directories, subdomains, parameter names, parameter values, and a wide variety of other request components. Compared with many CLI fuzzers it offers far more advanced features and fine-grained control. Note, however, that the Community (free) edition of Burp limits Intruder to about one request per second, which makes it impractically slow for large-scale fuzzing where CLI tools can issue thousands of requests per second. For quick, focused checks the free Intruder is fine, but for high-throughput scanning the throttling becomes a bottleneck.

The Professional edition removes that speed restriction and exposes additional Intruder features, allowing performance comparable to dedicated fuzzers while keeping Intruder’s powerful payload processing, insertion point management, and result-analysis tools. That combination — flexibility, advanced payload handling, and high throughput — is what makes Burp Intruder one of the strongest choices for web fuzzing and brute-force tasks.

In the following section we’ll walk through practical examples of using Burp Intruder for enumeration and fuzzing, covering common setups, payload strategies, and tips for analysing results effectively.

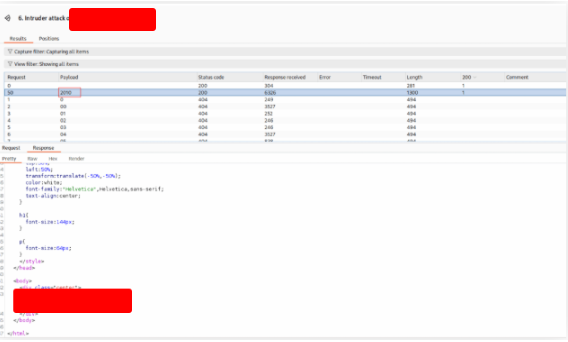

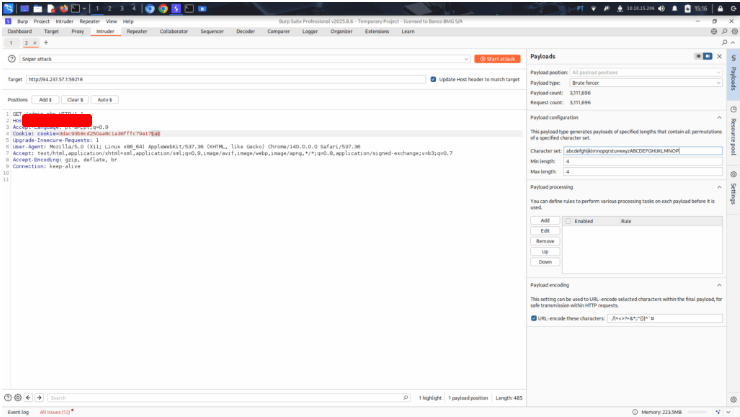

Use Burp Intruder to fuzz for ‘.html’ files under the /admin directory, to find a file containing the flag.

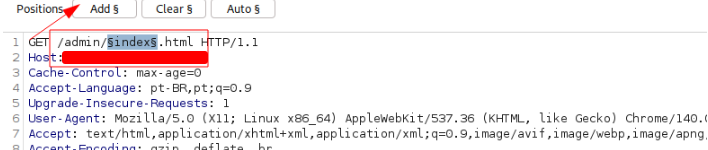

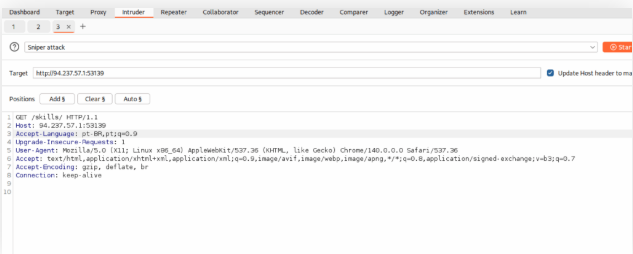

Browse to the target page so the request is captured in Burp, then right-click the request and choose Send to Intruder.

Change the request line to GET /admin/index.html. Select the word index and click the Add button to mark it as the payload position.

In the Payloads tab, select Simple list and load the wordlist:

/opt/useful/seclists/Discovery/Web-Content/common.txt

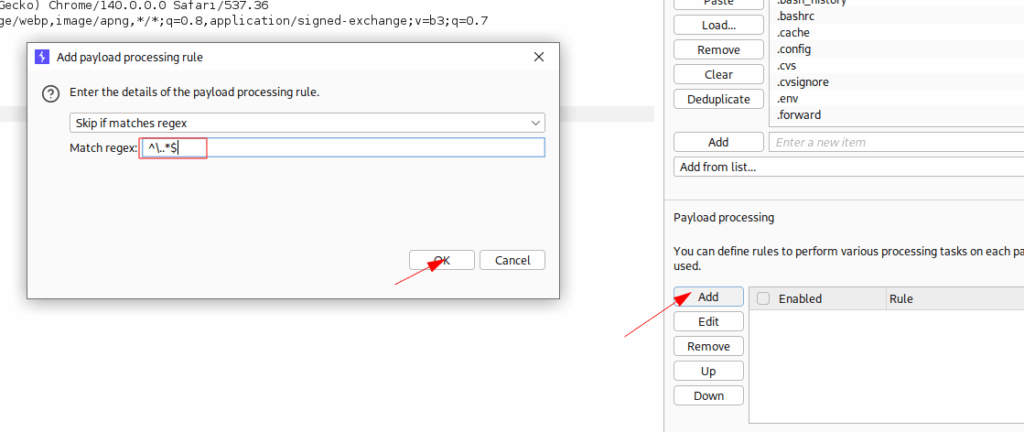

In Payload Processing, add a rule with the regex ^\..*$ (it matches any payload that begins with a dot) and click the Add button.

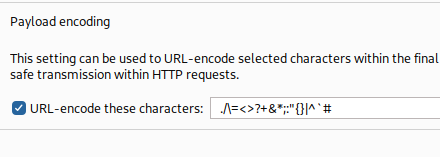

Select the option URL-encode these characters

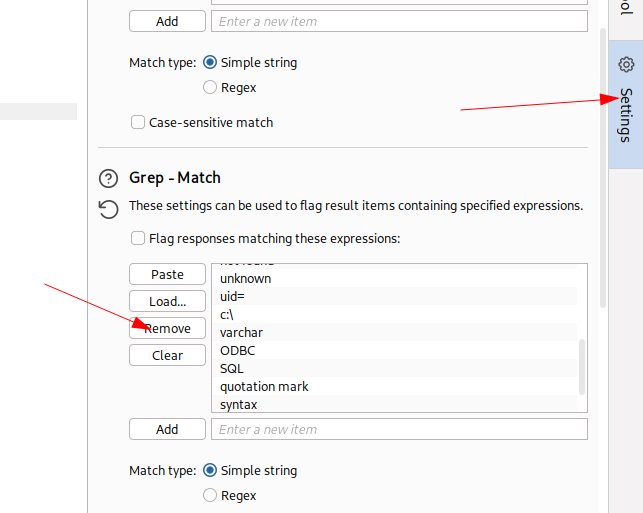

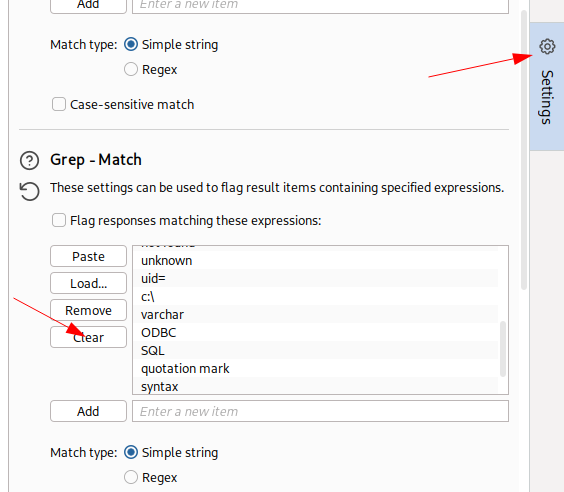

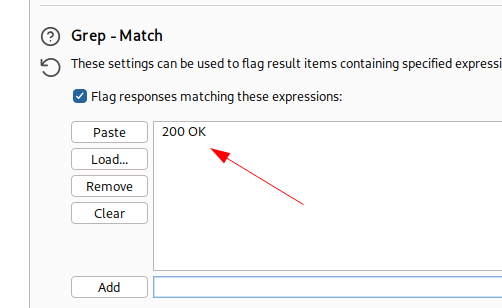

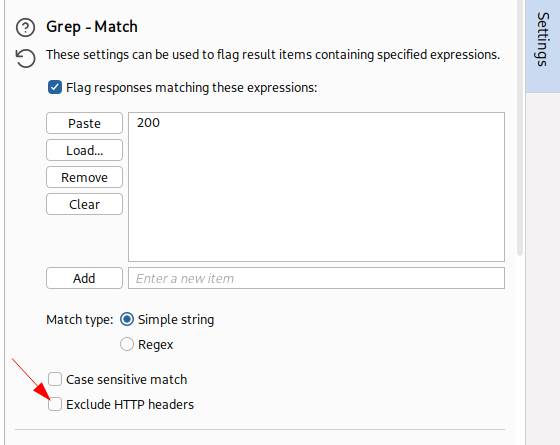

In the Settings tab, remove all strings from the Grep - Match field and add the string 200.

Don’t forget to deselect Exclude HTTP headers, because the status code appears in the response headers.

Start the attack and get the flag.
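To make the payload handling in this walkthrough easier to follow, here is a rough Python equivalent of what the attack sends. It assumes the regex rule is used to skip wordlist entries that begin with a dot; the function name `build_paths` and the sample words are hypothetical.

```python
import re
from urllib.parse import quote

SKIP = re.compile(r"^\..*$")  # same regex as in the payload-processing step


def build_paths(words, prefix="/admin/", suffix=".html"):
    """Turn wordlist entries into the request paths the fuzzer would try."""
    paths = []
    for word in words:
        word = word.strip()
        if not word or SKIP.match(word):
            continue  # drop blank lines and entries starting with a dot
        # URL-encode the payload before placing it in the request line.
        paths.append(prefix + quote(word, safe="") + suffix)
    return paths


sample = [".git", ".htaccess", "index", "login page", "2010"]
for path in build_paths(sample):
    print(f"GET {path} HTTP/1.1")
```

Filtering and encoding before sending keeps the request line valid, so a response other than 200 reflects the server's answer rather than a malformed request.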

ZAP Fuzzer

ZAP’s built-in fuzzing engine — simply called ZAP Fuzzer — is a capable tool for exercising and probing web endpoints. While it doesn’t offer the same depth of payload processing and advanced insertion-point controls you get with Burp Intruder, ZAP Fuzzer shines where raw throughput and flexibility matter: it imposes no artificial throttling, so you can drive a large number of requests quickly without hitting a built-in rate limit. That makes it particularly useful for high-volume enumeration, brute-force attempts, or any situation where you need speed rather than the fine-grained manipulation Intruder provides.

Functionally, ZAP Fuzzer covers the common fuzzing use cases: you can target URLs, request parameters, headers, bodies, and file uploads; feed it custom payload lists or generate payloads on the fly; and inspect responses to find anomalies, crashes, or interesting status codes. Because it lacks some of Intruder’s advanced features — for example, sophisticated payload processing macros, built-in comparisons, or the same level of insertion-point automation — you may need to combine ZAP Fuzzer with external tools or pre-process your payloads to replicate certain Intruder workflows. Still, its open approach and lack of throttling make it a strong option for rapid discovery.

In this section we’ll reproduce the same experiments we ran with Burp Intruder so you can compare results directly. We’ll cover how to configure ZAP Fuzzer for common tasks (directory and parameter fuzzing, authentication-aware fuzzing, and multi-threaded runs), tips for managing high-volume output (logging, response filtering, and automation hooks), and guidance on when to pick ZAP Fuzzer over Burp Intruder depending on your goals: speed and scale versus advanced payload handling and analysis.

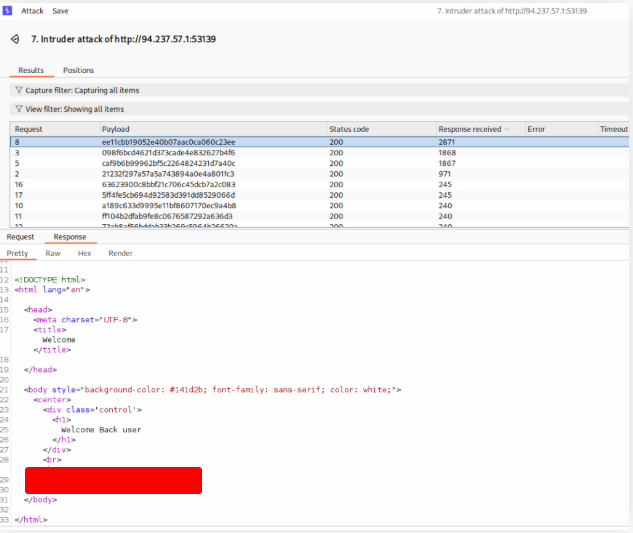

The directory we found above sets the cookie to the md5 hash of the username, as we can see the md5 cookie in the request for the (guest) user. Visit ‘/skills/’ to get a request with a cookie, then try to use ZAP Fuzzer to fuzz the cookie for different md5 hashed usernames to get the flag. Use the “top-usernames-shortlist.txt” wordlist from Seclists.

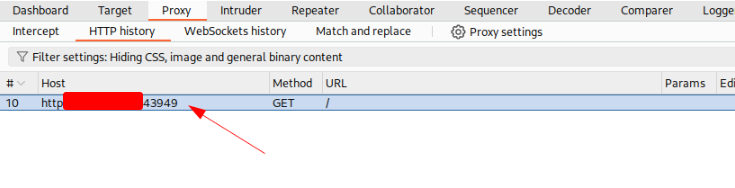

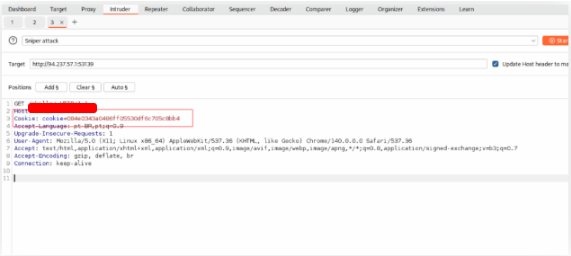

Open the page on your browser and intercept the request.

http://127.0.0.1:53139/skills/

Right click on the request and choose Send to Intruder

Copy the cookie from the request.

Select the cookie value and click the Add button. Then select the wordlist:

/usr/share/seclists/Usernames/top-usernames-shortlist.txt

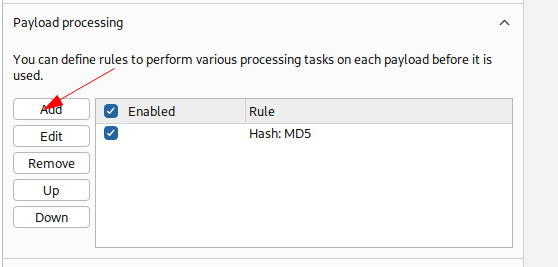

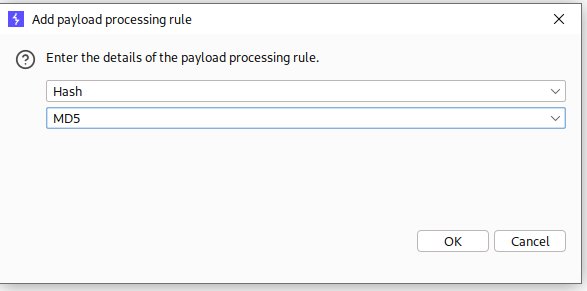

In Payload Processing, add a rule that hashes each payload with MD5.

Start the attack and get the flag.
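The hashing step can be reproduced outside the proxy to see what each request will carry. This is a sketch only: the usernames are a made-up sample rather than the contents of the wordlist, and the cookie name `cookie` is a placeholder for whatever name the application uses.

```python
import hashlib


def md5_hex(value: str) -> str:
    """Return the lowercase hex MD5 digest of a string."""
    return hashlib.md5(value.encode("utf-8")).hexdigest()


usernames = ["root", "admin", "test", "guest"]  # hypothetical sample entries
cookies = {name: md5_hex(name) for name in usernames}

for name, digest in cookies.items():
    # "cookie" is a placeholder cookie name, not taken from the target app.
    print(f"{name:<6} -> Cookie: cookie={digest}")
```

Comparing the digest computed for guest with the cookie seen in the captured request is a quick way to confirm the application really uses plain MD5 of the username.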

ZAP Scanner

OWASP ZAP includes a built-in Web Scanner that parallels the functionality of tools like Burp Scanner. Using ZAP’s spidering and crawling capabilities (including the traditional Spider and the AJAX Spider), ZAP can build a site map of target applications and then run both passive and active scanning engines to look for a wide range of security issues.

- Site mapping: ZAP discovers pages, endpoints, parameters, and resources by crawling the app. This map is the foundation for any automated scan and helps you target only relevant areas.

- Passive scanning: Runs while you browse or proxy traffic through ZAP and flags potential issues without altering requests. It finds problems detectable from responses alone (e.g., missing security headers, cookie flags, information leakage) and is safe to run against production because it doesn’t modify state.

- Active scanning: Sends crafted requests and payloads to probe for exploitable vulnerabilities (e.g., SQL injection, command injection, XSS). Active scans are more intrusive and can change data or trigger side effects, so they should be used carefully in non-production or with explicit permission.

Practical notes:

- Use authenticated scans (configure ZAP’s authentication and session handling) to reach protected areas and test logic that only appears after login.

- Tune scan policies and strength to balance coverage and safety—high-strength active scans can be noisy and may cause disruptions.

- Review and triage results manually: automated scanners generate false positives, so correlate findings with request/response evidence and exploitability context.

- Leverage ZAP scripting, scan rules, and add-ons to extend detection capabilities or tailor the scanner to the app’s tech stack.

- Exportable reports (HTML, JSON, XML) and integrations with CI/CD pipelines make ZAP convenient for continuous testing and repeatable security checks.

In short, ZAP Scanner is a capable automated testing component that, when combined with proper site mapping, authenticated sessions, and tuned scan policies, becomes a valuable part of a web application security testing workflow.
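To give a feel for what a passive check does, the sketch below inspects response headers without sending anything to the server. It is a simplified illustration, not ZAP's implementation; the header list, function names, and sample response are assumptions.

```python
# Headers a passive check might expect on a response (illustrative list).
EXPECTED_HEADERS = [
    "Content-Security-Policy",
    "Strict-Transport-Security",
    "X-Content-Type-Options",
    "X-Frame-Options",
]


def missing_security_headers(headers: dict) -> list:
    """Return the expected headers that are absent (names are case-insensitive)."""
    present = {name.lower() for name in headers}
    return [h for h in EXPECTED_HEADERS if h.lower() not in present]


def insecure_cookies(set_cookie_values: list) -> list:
    """Return names of cookies lacking the HttpOnly or Secure attribute."""
    flagged = []
    for cookie in set_cookie_values:
        attrs = {part.strip().lower() for part in cookie.split(";")[1:]}
        if "httponly" not in attrs or "secure" not in attrs:
            flagged.append(cookie.split("=", 1)[0])
    return flagged


# Hypothetical response captured while browsing through the proxy.
headers = {"Content-Type": "text/html", "x-frame-options": "DENY"}
cookies = ["session=abc123; HttpOnly", "theme=dark; Secure; HttpOnly"]

print(missing_security_headers(headers))
print(insecure_cookies(cookies))
```

Because the checks only read traffic that already passed through the proxy, they do not change application state, which is what distinguishes passive from active scanning.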

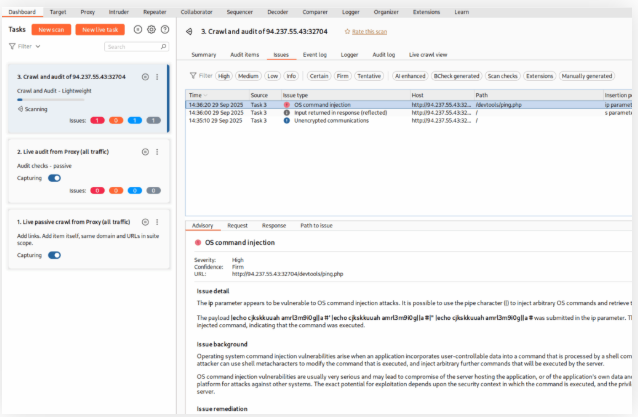

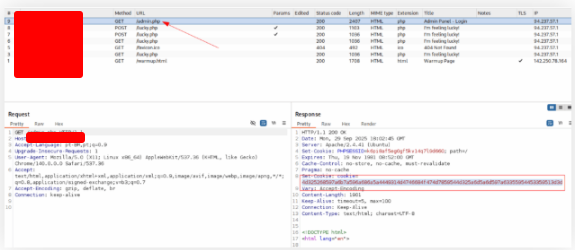

Run ZAP Scanner on the target above to identify directories and potential vulnerabilities. Once you find the high-level vulnerability, try to use it to read the flag at ‘/flag.txt’

I don’t like ZAP, so I use Burp Suite. Start a Burp Crawl & Audit scan and wait until it finds the vulnerability.

Burp found the vulnerability and reported that the vulnerable parameter is ip. So, I searched for the flag.txt file.

Then I read the file to get the value of the flag.

Skills Assessment – Using Web Proxies

For each scenario I list: Goal → Best feature(s) → Why → Practical steps (concise, copy-pasteable). Use Burp when you need advanced payload processing, deep request/response analysis and exploit tooling; use ZAP when you want no-throttle high throughput fuzzing, quick automated scans, or easier CI integration.

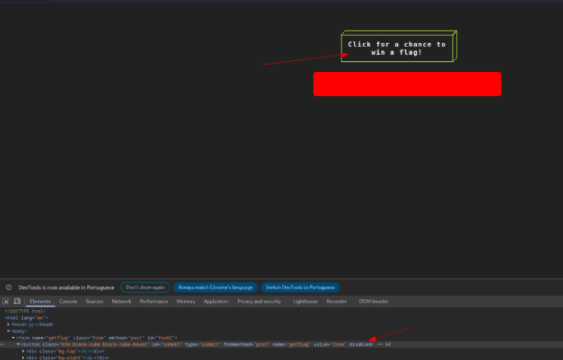

The /lucky.php page has a button that appears to be disabled. Try to enable the button, and then click it to get the flag.

Just open the site. Right-click and choose Inspect. Select the button, remove the disabled attribute from it, and click the button.

The /admin.php page uses a cookie that has been encoded multiple times. Try to decode the cookie until you get a value with 31-characters. Submit the value as the answer.

Open the page and copy the cookie.

Paste the cookie into the Decoder tab and decode it as below.

Once you decode the cookie, you will notice that it is only 31 characters long, which appears to be an md5 hash missing its last character. So, try to fuzz the last character of the decoded md5 cookie with all alpha-numeric characters, while encoding each request with the encoding methods you identified above. (You may use the “alphanum-case.txt” wordlist from Seclist for the payload)

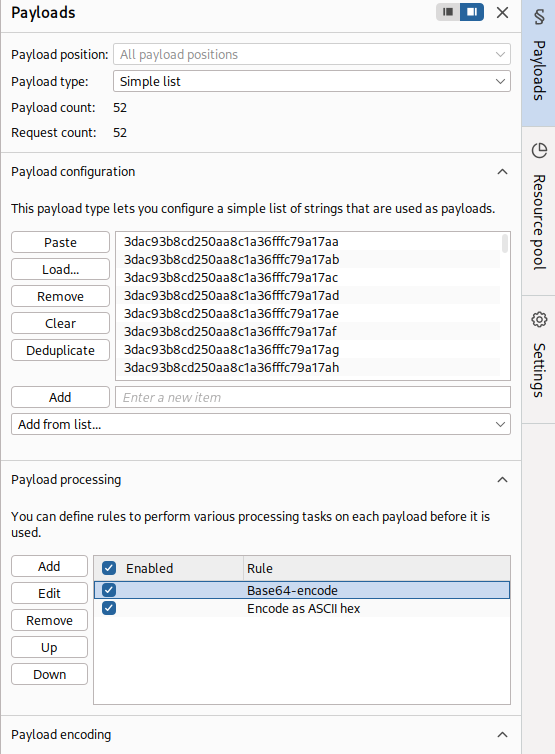

Use the script to generate the list of strings

    #!/usr/bin/env python3
    """Generate one candidate hash per possible final character."""
    import string
    from pathlib import Path

    # 31 known characters followed by a placeholder for the missing one.
    input_str = "3dac93b8cd250aa8c1a36fffc79a17ax"
    placeholder = "x"
    output_file = "payloads_x_substituted.txt"

    # The task asks for all alphanumeric characters, so digits are included too.
    charset = string.ascii_lowercase + string.ascii_uppercase + string.digits

    out_path = Path(output_file)
    with out_path.open("w", encoding="utf-8") as f:
        for ch in charset:
            # Substitute the placeholder with the candidate character.
            candidate = input_str.replace(placeholder, ch)
            f.write(candidate + "\n")

    print(f"Generated {len(charset)} payloads in '{out_path}'.")

    # Show the first five payloads as a sanity check.
    with out_path.open("r", encoding="utf-8") as f:
        for i, line in enumerate(f):
            if i >= 5:
                break
            print(f"{i + 1}. {line.strip()}")

After importing the list, encode the strings in this order.

Start the attack and get the flag.

You are using the ‘auxiliary/scanner/http/coldfusion_locale_traversal’ tool within Metasploit, but it is not working properly for you. You decide to capture the request sent by Metasploit so you can manually verify it and repeat it. Once you capture the request, what is the ‘XXXXX’ directory being called in ‘/XXXXX/administrator/..’?

Configure the exploit using msfconsole and intercept using Burp Suite

[*] Auxiliary module execution started - auxiliary/scanner/http/coldfusion_locale_traversal

[*] Running against: target.com:8500

[*] Sending HTTP request to http://target.com/CFIDE/administrator/..

---- RAW HTTP REQUEST (as seen in Burp) ----

GET /[REDACTED]/administrator/.. HTTP/1.1

Host: target.com:8500

User-Agent: Mozilla/5.0 (compatible; MSF)

Accept: */*

Connection: Close

---- END REQUEST ----

[-] target.com:8500 - No vulnerability detected (HTTP/1.1 200 OK)

[*] Auxiliary module execution completed