The Architecture of Linux: A Deep Dive

Linux is the foundation for everything from personal workstations and large enterprise servers to embedded and mobile devices. In information security it holds a central position, valued for its robustness, flexibility, stability, and open-source development model. This section covers the core mechanics of Linux: its history, underlying philosophy, architectural layers, key components, and the Filesystem Hierarchy, all of which are essential knowledge for any aspiring or practicing cybersecurity professional. Think of it as learning how a complex, high-performance vehicle is built and how its systems work before getting behind the wheel.

Defining the Operating System

At its simplest, Linux is an operating system (OS), performing the same essential role as Windows, macOS, or Android. An OS is a vital piece of system software that efficiently manages a computer’s hardware resources, acting as the critical intermediary that facilitates smooth communication between all software applications and the physical hardware components. What distinguishes Linux is its multitude of versions, referred to as distributions (or “distros”). These are distinct, complete versions of the OS, often tailored and bundled for specific user needs, environments, or philosophical preferences.

A Brief Historical Context

The road to the modern Linux OS is marked by several pivotal developments. The story begins with the creation of the Unix operating system in 1970 by Ken Thompson and Dennis Ritchie at AT&T Bell Labs. Unix's architecture and design principles laid the foundational blueprint. The Berkeley Software Distribution (BSD) followed in 1977, but because it contained Unix code owned by AT&T, a resulting legal dispute constrained its development.

A major shift occurred in 1983 when Richard Stallman launched the GNU Project. His goal was to develop a complete, free (as in freedom) Unix-like operating system. The project produced many essential software components, and Stallman also wrote the GNU General Public License (GPL), a licensing framework central to the free and open-source software movement. Despite these efforts, a fully functional free kernel, the core part of the OS, remained elusive until a Finnish computer science student took on the challenge.

In 1991, Linus Torvalds began what was originally a personal project to develop a new, free kernel, which became known as the Linux kernel. Over the decades it has grown from a small collection of C files under a license that prohibited commercial distribution into a code base of tens of millions of source lines, licensed under the GNU General Public License v2.

Today, the Linux ecosystem is vast, boasting well over 600 active distributions, each built upon the Linux kernel and its supporting libraries. Prominent examples include Ubuntu, Debian, Fedora, openSUSE, Red Hat Enterprise Linux, and Linux Mint.

Security, Performance, and Open Source

Linux is widely regarded as more secure than many other operating systems. The kernel has had vulnerabilities in the past, but it is a less frequent target of common malware and benefits from very frequent, community-driven updates. Beyond security, Linux is known for its stability and for delivering high performance on both end-user machines and servers. On the other hand, it can present a steeper learning curve for novice users, and its hardware driver support may occasionally be less comprehensive than that of the dominant desktop operating systems.

The core principle of free and open-source software means that anyone is permitted to study, modify, and distribute the source code for commercial or non-commercial purposes. This collaborative model is a primary source of its strength and innovation. Linux-based systems power an enormous variety of devices, including massive web servers, supercomputers, desktops, and a vast range of embedded devices (routers, smart TVs, gaming consoles). Importantly, the dominant mobile OS, Android, is built upon the Linux kernel, cementing Linux’s status as the most widely installed operating system globally.

The Linux Philosophy: Simplicity and Cooperation

The fundamental design philosophy of the Linux environment revolves around simplicity, modularity, and transparency. This approach champions the idea of developing many small, specialized programs (tools) where each one is designed to perform one single task exceptionally well. The power of the system comes from the ability to seamlessly combine and chain these tools in numerous ways to achieve highly complex and sophisticated operations, maximizing efficiency and adaptability.

This philosophy is codified in several core principles:

| Principle | Description |
| --- | --- |
| Everything is a File | A fundamental concept stating that all components, including configuration data, hardware devices, and running services, are represented by a file or a directory within the filesystem. |
| Small, Single-Purpose Programs | Emphasizes the creation of lightweight, focused utilities that each do one job well. |
| Ability to Chain Programs | Tools can be integrated and piped together to execute extensive and complex workflows, such as advanced data processing or filtering. |
| Avoid Captive User Interfaces | Prioritizes the command-line interface (CLI) or shell, which grants users the highest possible level of control and scripting capability over the system. |
| Configuration Data in Text Files | System and application settings are predominantly stored in easily readable and editable text files, such as the /etc/passwd file for system users. |
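As a rough illustration of two of these principles (chaining small programs and keeping data in plain text), the sketch below pipes sample data through four standard utilities. The account entries are made up for the example and only mimic the colon-separated layout of /etc/passwd; the function names `sample_passwd` and `shell_usage` are hypothetical.

```shell
# Hypothetical sample in the colon-separated /etc/passwd layout
# (name:password:UID:GID:comment:home:login shell).
sample_passwd() {
  cat <<'EOF'
root:x:0:0:root:/root:/bin/bash
daemon:x:1:1:daemon:/usr/sbin:/usr/sbin/nologin
alice:x:1000:1000:Alice:/home/alice:/bin/bash
bob:x:1001:1001:Bob:/home/bob:/bin/zsh
EOF
}

# Each tool does one job; the pipe (|) feeds one tool's output to the next.
shell_usage() {
  sample_passwd |
    cut -d: -f7 |   # keep only the 7th field: the login shell
    sort |          # bring identical shells next to each other
    uniq -c |       # collapse duplicates and prefix each with a count
    sort -rn        # order by count, highest first
}

shell_usage
```

On a real system the same pipeline could read /etc/passwd directly in place of the sample function.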

Key System Components

A functional Linux system is composed of several critical, interacting parts:

| Component | Description |
| --- | --- |
| Bootloader | The initial code that executes upon system startup, responsible for loading the operating system kernel into memory (e.g., GRUB). |
| OS Kernel | The heart of the OS; it manages hardware resources, including CPU scheduling, memory allocation, and I/O device access. |
| Daemons | Background services that run without direct user interaction. They manage essential operations like network connectivity, printing, and logging. |
| OS Shell (Command Line) | The command interpreter (CLI) that acts as the user's interface to the operating system, allowing commands to be executed and scripts to be run. Popular shells include Bash, Zsh, and Fish. |
| Graphics Server | A subsystem (often called "X" or "X-server") that enables graphical programs to run, whether locally or across a network (the X Window System). |
| Window Manager / Desktop Environment | Provides the Graphical User Interface (GUI), including features like windows, icons, and menus. Popular examples are GNOME, KDE, and Cinnamon. |
| System Utilities | Applications and programs that perform specific functions for the user or support other system processes. |
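A few of these components can be inspected from the shell itself. The sketch below uses the standard `uname` utility and the `SHELL` environment variable; the function name `system_summary` is an illustrative choice, and the values printed will differ from machine to machine.

```shell
# Print basic facts about the running kernel and the user's login shell.
system_summary() {
  echo "kernel name: $(uname -s)"        # e.g. Linux
  echo "kernel release: $(uname -r)"     # version string of the running kernel
  echo "architecture: $(uname -m)"       # hardware architecture the kernel runs on
  echo "login shell: ${SHELL:-unknown}"  # falls back to "unknown" if SHELL is unset
}

system_summary
```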

The Layered Architecture

The Linux operating system is conceptually organized into a series of distinct layers, starting from the physical hardware and extending outward to the user applications:

- Hardware Layer: The physical foundation, including the CPU, RAM, hard drives, and all peripheral devices.

- Kernel Layer: The core control layer. Its function is to virtualize and manage the hardware resources, preventing conflicts and allocating virtual resources (CPU time, memory) to different processes.

- Shell Layer (CLI/GUI): The interface layer. This is the command-line interface or graphical interface through which the user interacts with the system and issues commands to the kernel.

- System Utility Layer: The outermost layer, which comprises the applications and tools that deliver the full range of the operating system’s functionality to the end-user.

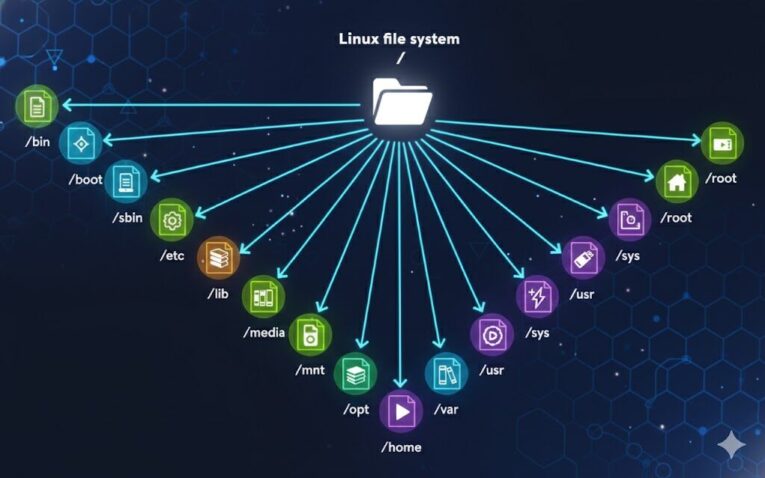

The File System Hierarchy (FHS)

Unlike operating systems that use multiple “drives,” Linux employs a single, unified tree-like directory structure defined by the Filesystem Hierarchy Standard (FHS). All files and directories branch out from a single root point, designated by the forward slash (/).

| Path | Description |
| --- | --- |
| / | The root directory. It is the top-level directory containing all other directories and the files necessary to boot the system before other filesystems are mounted. |
| /bin | Essential binaries (executable programs) required for basic system operation. |
| /boot | Contains files required for the boot process, including the static bootloader files and the kernel executable. |
| /dev | Contains special device files that represent hardware components attached to the system. |
| /etc | Stores local system-wide configuration files for the OS and installed applications. |
| /home | The parent directory for individual user home directories (e.g., /home/username). |
| /lib | Essential shared libraries needed by the binaries in /bin and /sbin. |
| /media | Standard mount point for external, removable media (like USB drives and optical discs). |
| /mnt | A mount point for temporarily mounted filesystems. |
| /opt | Used for storing optional application software packages, typically third-party or supplementary tools. |
| /root | The home directory of the root superuser (separate from /home). |
| /sbin | Essential system administration binaries (executables) used for system maintenance and booting. |
| /tmp | A directory for temporary files used by the OS and applications. Contents are often cleared upon system reboot. |
| /usr | Contains shareable, read-only data, including most user binaries, libraries, and documentation (e.g., man pages). |
| /var | Stores variable data that is expected to change frequently, such as log files, mail spool files, and web server data. |
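To see how closely a given machine follows this layout, a short loop can test each path from the table. This is only a sketch: the function name `fhs_report` is hypothetical, and some directories (for example /media or /boot) may legitimately be absent on minimal or containerized systems.

```shell
# Report which of the standard top-level directories exist on this machine.
fhs_report() {
  for dir in /bin /boot /dev /etc /home /lib /media /mnt /opt /root /sbin /tmp /usr /var; do
    if [ -d "$dir" ]; then       # -d is true for directories (symlinks are followed)
      echo "$dir present"
    else
      echo "$dir missing"
    fi
  done
}

fhs_report
```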


🐧 The Diverse World of Linux Operating Systems

Linux-based Operating Systems, often referred to simply as “distributions” or “distros,” are comprehensive software packages built upon the foundational Linux kernel. They represent fully functional operating systems designed for an enormous range of applications, spanning from vast enterprise servers and small, embedded devices to modern desktop workstations and even mobile platforms.

One of the most compelling aspects of the Linux ecosystem is its diversity. Every distribution starts from the same core engine, the Linux kernel, but evolves in its own direction to serve a particular user base and set of technical requirements. While they all share the same underlying architecture and open-source principles, each distribution ships its own curated selection of software packages, configuration defaults, and graphical user interfaces (GUIs), delivering a tailored experience for different needs.

Key Applications and Popular Choices

Linux’s versatility means it is utilized across virtually all segments of modern computing:

- 🌐 Servers: Due to its inherent stability, robust security features, and efficient resource management, Linux is the dominant choice for web servers, database systems, and network infrastructure worldwide.

- 💻 Desktop Computing: Many users select a Linux distribution for their personal computers because it is generally free of charge, open-source, and highly flexible, allowing for deep customization of the look and feel.

- ☁️ Cloud Computing & Virtualization: Linux forms the backbone of most public and private cloud environments.

- 📱 Embedded Systems: From smart appliances to industrial control systems, lightweight distros power countless specialized devices.

Some of the most widely adopted and influential distributions include:

- Ubuntu

- Fedora

- Debian

- Red Hat Enterprise Linux (RHEL)

- CentOS

For newcomers to the Linux environment, distributions like Ubuntu and Fedora are frequently recommended due to their user-friendly installation processes and active community support.

🔒 Linux in Cybersecurity and Professional Use

In the field of cybersecurity, system administrators, penetration testers, and security analysts often show a strong preference for Linux. The open-source nature of the kernel and the surrounding utility software is a major advantage, as it allows security professionals to scrutinize the source code for vulnerabilities, understand its inner workings completely, and customize the operating system with precision. This deep level of control enables them to strip down the OS to only essential components or configure it meticulously for highly specific, secure testing environments.

A distinct category of distributions has been developed explicitly for security-related tasks, providing pre-installed suites of tools for everything from digital forensics to penetration testing. These specialized systems include:

- Kali Linux (The most popular choice, known for its extensive arsenal of pre-configured security tools.)

- Parrot Security OS

- BlackArch

- BackBox

The primary differences between standard and specialized distributions lie in the pre-installed software packages, the default desktop environment, and the configuration of available tools. For instance, while Ubuntu is exceptionally popular for general desktop use, and Debian is a common choice for stable server deployments, systems like RHEL and CentOS are optimized for mission-critical, enterprise-level operations.

🛠️ A Deep Dive into Debian

Debian stands as a foundational and highly respected Linux distribution, earning a reputation for its rock-solid stability and unwavering reliability. It serves a wide array of roles, from personal desktop machines to robust servers and specialized embedded applications.

Package Management and Stability

Debian employs the sophisticated Advanced Package Tool (APT) system for managing software. This system is critical for handling the installation, removal, and most importantly, the automatic application of security patches and software updates. APT is designed to keep the system current and protected by efficiently managing dependencies and securely fetching updates from repositories. This process can be executed manually or configured for automatic scheduling.
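A typical manual maintenance pass might look like the sketch below. The `run` wrapper and the `DRY_RUN` variable are assumptions added for this example so that the commands are only printed unless explicitly enabled; `apt-get update`, `upgrade`, and `install` are standard APT subcommands, and `tmux` is just a sample package name.

```shell
# By default the commands are only printed; set DRY_RUN=0 to actually run them.
DRY_RUN="${DRY_RUN:-1}"

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"      # show what would be executed
  else
    "$@"             # execute the command as given
  fi
}

apt_maintenance() {
  pkg="$1"                             # package name supplied by the caller
  run sudo apt-get update              # refresh the package lists from the repositories
  run sudo apt-get upgrade -y          # apply pending updates, including security fixes
  run sudo apt-get install -y "$pkg"   # install a package together with its dependencies
}

apt_maintenance tmux
```

Scheduled, automatic application of updates is usually delegated to a separate mechanism rather than a hand-written script like this one.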

One of Debian's greatest strengths is its commitment to long-term stability. Through its Long-Term Support (LTS) effort, releases continue to receive security updates and maintenance for extended periods, up to roughly five years. This extended support is vital for server environments and industrial systems that demand uninterrupted, 24/7 operation. While any complex system may face security challenges, the Debian development community is known for responding quickly with security advisories and patches.

Customization and Control

While Debian is celebrated for its performance, it may present a steeper learning curve for absolute beginners compared to highly abstracted distributions. The initial configuration and setup can be more involved, but this complexity translates directly into unparalleled flexibility and control over the operating system.

For experienced and advanced users, this level of control is highly beneficial. Gaining a deeper understanding of the system’s underlying mechanisms and configuration files allows for highly optimized and bespoke system environments. It is a common professional observation that while initially more time is invested in learning the core commands and configuration logic, this knowledge ultimately leads to significant time savings and greater operational efficiency compared to struggling with simplified interfaces that mask complexity.

In summary, Debian is a versatile, dependable platform whose stability, reliability, and robust commitment to security make it an excellent choice for a wide spectrum of computing needs, including demanding tasks within the cybersecurity domain.

🖥️ Unlocking Control: An Exploration of the Command-Line Interface

Understanding and utilizing the Linux command-line interface (CLI) is a foundational skill for anyone working in technology, especially those focused on server administration and cybersecurity. Given the prevalence of Linux-based systems in critical infrastructure—such as the vast majority of web servers—mastering its control mechanisms is essential. Linux is often preferred over other server operating systems, like Windows Server, due to its reputation for greater stability and fewer errors in long-term operation.

To effectively control and manage these robust systems, one must become proficient with the core component: the Shell.

The Shell and the Console

A Linux terminal, often loosely called a console or command line, provides a text-based Input/Output (I/O) channel between the user and the operating system. Commands typed there are read by the shell, which in turn asks the kernel to carry them out. The kernel is the central component that manages the system's hardware and processes.

The Shell itself acts as the interpreter or command processor. You can conceptually view the shell as a highly powerful, text-based alternative to a Graphical User Interface (GUI). Instead of clicking icons, you type commands to execute actions, such as navigating the directory structure, manipulating files, launching applications, and querying system status. The shell offers significantly greater capability and speed for complex system administration tasks and bulk operations compared to a typical GUI.
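The short session below sketches what navigating directories and manipulating files looks like in practice. It works inside a scratch directory created with `mktemp -d` so that no real files are affected; the function name `file_session` and the file names are made up for the example.

```shell
# The body runs in a subshell ( ... ), so the caller's working directory is untouched.
file_session() (
  work="$(mktemp -d)" || exit 1   # scratch directory for the demonstration
  cd "$work" || exit 1            # change into it
  mkdir reports                   # create a directory
  echo "draft" > notes.txt        # create a file by redirecting output
  mv notes.txt reports/           # move the file into the directory
  ls reports                      # list the directory: prints notes.txt
  cat reports/notes.txt           # show the file's contents: prints draft
  cd / && rm -r "$work"           # leave and delete the scratch directory
)

file_session
```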

The term “console” typically refers to the traditional, full-screen text-mode environment, whereas the “terminal” often refers to the windowed application used on a graphical desktop to access the shell.

🌐 Accessing the Core: Terminal Emulators

A terminal emulator is a software application designed to mimic the functionality of a physical terminal within a graphical desktop environment (GUI). This software allows users to run text-based programs and interact with the operating system’s shell while still utilizing their mouse, windows, and other graphical features.

A Powerful Analogy

Consider the Kernel as the brain of a large, high-security facility, responsible for all operations and resources.

- The Shell is the sophisticated internal language or operating protocol this brain understands.

- The Terminal (or Console) is the dedicated communication station inside the facility where authorized personnel can speak this language (enter commands) and receive direct, unfiltered responses.

- A Terminal Emulator is software that recreates that communication station as a window on the administrator's graphical desktop (the GUI), so the station no longer has to be a dedicated physical device. Instructions (commands) typed into the window go to the Shell, which then communicates with the Kernel.

Additionally, utilities known as terminal multiplexers (such as tmux or GNU Screen) allow users to divide a single terminal window into multiple independent sessions. This is like having several secure communication lines open simultaneously on one screen, so you can manage different tasks, directories, or remote systems concurrently without opening numerous individual emulator windows. Multiplexers also improve productivity by providing organized workspaces and session persistence.

📜 The Heart of the CLI: The Bourne-Again Shell (Bash)

The most widely used shell on Linux systems is the Bourne-Again Shell (Bash), which is part of the GNU Project. Virtually every task that can be performed through the graphical interface can also be carried out in Bash.

The shell's real strength is fast, direct interaction with system programs and processes. Bash is also a scripting language: users can write shell scripts, simple or complex, to automate repetitive administrative and operational tasks, significantly reducing manual effort.
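As a small example of that kind of automation, the sketch below makes a backup copy of every `.conf` file in a directory. The function name `backup_configs`, the `.bak` suffix, and the demo file names are illustrative choices, not a standard tool.

```shell
# Copy every *.conf file in a directory to <name>.conf.bak and report how many were copied.
backup_configs() {
  dir="$1"
  count=0
  for f in "$dir"/*.conf; do
    [ -e "$f" ] || continue     # the glob stays literal when nothing matches; skip it
    cp -- "$f" "$f.bak"         # keep the original and write a .bak copy beside it
    count=$((count + 1))
  done
  echo "backed up $count file(s)"
}

# Usage on a throwaway directory
demo="$(mktemp -d)"
touch "$demo/a.conf" "$demo/b.conf" "$demo/readme.txt"
backup_configs "$demo"          # prints: backed up 2 file(s)
rm -r "$demo"
```

Doing the same by hand in a file manager would take one copy-and-rename per file; the loop handles any number of files with one command.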

While Bash is the default on most distributions, other popular shells exist, each with its own features, syntax quirks, and use cases:

- Zsh (Z Shell): Known for its powerful customization and advanced features, often enhanced by frameworks like Oh My Zsh.

- Ksh (Korn Shell): An older, highly robust shell often favored in corporate and legacy Unix environments.

- Tcsh/Csh: Known for its C-like syntax.

- Fish (Friendly Interactive Shell): Designed with a focus on being user-friendly with features like syntax highlighting and autosuggestions.
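To check which of these shells are available on a particular machine, one option is to look each of them up on the `PATH` with the standard `command -v` builtin. The function name `installed_shells` is hypothetical, and the result depends entirely on what has been installed.

```shell
# Look up each shell on the PATH and report where it lives, if anywhere.
installed_shells() {
  for name in bash zsh ksh tcsh fish; do
    if shell_path="$(command -v "$name")"; then
      echo "$name: $shell_path"      # found: print its location
    else
      echo "$name: not installed"    # command -v returned a non-zero status
    fi
  done
}

installed_shells
```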