This module provides learners with the core skills needed for web reconnaissance, a crucial phase in ethical hacking and penetration testing. Students explore both active and passive techniques to gather intelligence about web targets safely and effectively.

Key topics covered include:

- DNS enumeration: Mapping domain names to IP addresses and uncovering subdomains.

- Web crawling: Systematically exploring websites to identify content, links, and potential vulnerabilities.

- Analysis of web archives and HTTP headers: Discovering historical versions of sites and extracting server information.

- Fingerprinting web technologies: Identifying the frameworks, libraries, and platforms that power web applications.

By the end of this module, learners gain the ability to collect actionable intelligence, understand the web attack surface, and prepare for more advanced penetration testing exercises.

Utilising WHOIS

Perform a WHOIS lookup against the paypal.com domain. What is the registrar Internet Assigned Numbers Authority (IANA) ID number?

┌──(alex㉿htd)-[~]

└─$ whois paypal.com

Domain Name: PAYPAL.COM

Registry Domain ID: 8017040_DOMAIN_COM-VRSN

Registrar WHOIS Server: whois.markmonitor.com

Registrar URL: http://www.markmonitor.com

Updated Date: 2024-10-08T21:00:07Z

Creation Date: 1999-07-15T05:32:11Z

Registry Expiry Date: 2025-07-15T05:32:11Z

Registrar: MarkMonitor Inc.

Registrar IANA ID: [REDACTED]

Registrar Abuse Contact Email: abusecomplaints@markmonitor.com

Registrar Abuse Contact Phone: +1.2086851750

Domain Status: clientDeleteProhibited https://icann.org/epp#clientDeleteProhibited

Domain Status: clientTransferProhibited https://icann.org/epp#clientTransferProhibited

Domain Status: clientUpdateProhibited https://icann.org/epp#clientUpdateProhibited

Domain Status: serverDeleteProhibited https://icann.org/epp#serverDeleteProhibited

Domain Status: serverTransferProhibited https://icann.org/epp#serverTransferProhibited

Domain Status: serverUpdateProhibited https://icann.org/epp#serverUpdateProhibited

Name Server: NS1.P57.DYNECT.NET

Name Server: NS2.P57.DYNECT.NET

Name Server: PDNS100.ULTRADNS.NET

Name Server: PDNS110.ULTRADNS.NET

DNSSEC: signedDelegation

DS Data: 34800 13 2 D9E64BABC8718F09D3B596F9D1099D4C47C3F595731A2101DFCEE5DDD428C08F50

DS Data: 7037 13 2 9797EAFPF96889BED549795DEA4A0613F189A9F7AC80D794F7DDFECA9A190B

URL of the ICANN Whois Inaccuracy Complaint Form: https://www.icann.org/wicf/

>>> Last update of whois database: 2025-05-06T11:57:40Z <<<
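The registrar field can also be pulled out of saved WHOIS text programmatically. The following is a minimal sketch, assuming the output uses the `Key: value` layout shown above; the function name `parse_whois_fields` and the sample record (including its placeholder IANA ID) are hypothetical and only illustrate the parsing step.

```python
def parse_whois_fields(whois_text):
    """Collect 'Key: value' pairs from raw WHOIS text.

    Repeated keys (e.g. 'Name Server') keep every value, in order.
    """
    fields = {}
    for line in whois_text.splitlines():
        stripped = line.strip()
        # Skip registry notices and comment-style lines.
        if stripped.startswith((">>>", "%", "#")):
            continue
        key, sep, value = stripped.partition(":")
        # Ignore lines that are not in 'Key: value' form.
        if not sep or not key.strip() or not value.strip():
            continue
        fields.setdefault(key.strip(), []).append(value.strip())
    return fields


# Hypothetical sample; 9999 is a placeholder, not a real registrar ID.
SAMPLE = """\
Domain Name: EXAMPLE.TEST
Registrar URL: http://registrar.example.test
Registrar: Example Registrar Inc.
Registrar IANA ID: 9999
Name Server: NS1.EXAMPLE.TEST
Name Server: NS2.EXAMPLE.TEST
>>> Last update of whois database: 2025-05-06T11:57:40Z <<<
"""

fields = parse_whois_fields(SAMPLE)
print(fields["Registrar IANA ID"][0])
print(fields["Name Server"])
```

Splitting on the first colon only keeps values such as URLs intact, since they contain colons of their own.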

What is the admin email contact for the tesla.com domain (also in-scope for the Tesla bug bounty program)?

Digging DNS

Now that we have a solid grasp of DNS fundamentals and the different types of DNS records, it’s time to move into the practical side of things. In this section, we’ll explore the tools and techniques that leverage DNS for web reconnaissance, showing how attackers and ethical hackers alike can gather valuable intelligence about a target’s infrastructure.

Whether it’s mapping subdomains, identifying mail servers, or uncovering hidden services, understanding how to use DNS effectively is a critical skill in any web penetration testing workflow.

Which IP address maps to example.com?

┌──(alex㉿htd)-[~]

└─$ nslookup example.com

Server: 192.168.15.1

Address: 192.168.15.1#53

Non-authoritative answer:

Name: example.com

Address: [REDACTED]

Name: example.com

Address: 2a03:b0c0:1:e0::32c:b001

Which domain is returned when querying the PTR record for 134.209.24.248?

┌──(alex㉿htd)-[~]

└─$ dig -x 134.209.24.248

; <<>> DiG 9.20.4-2~Debian <<>> -x 134.209.24.248

;; global options: +cmd

;; Got answer:

;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 2899

;; flags: qr rd ra; QUERY: 1, ANSWER: 1, AUTHORITY: 0, ADDITIONAL: 1

;; OPT PSEUDOSECTION:

; EDNS: version: 0, flags:; udp: 512

;; QUESTION SECTION:

;248.24.209.134.in-addr.arpa. IN PTR

;; ANSWER SECTION:

248.24.209.134.in-addr.arpa. 1800 IN PTR [REDACTED].

;; Query time: 171 msec

;; SERVER: 192.168.15.1#53(192.168.15.1) (UDP)

;; WHEN: Tue May 06 09:54:45 -03 2025

;; MSG SIZE rcvd: 87
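The QUESTION SECTION above shows that a PTR lookup does not query the IP address directly: the octets are reversed and placed under in-addr.arpa. As a small illustration, Python's standard `ipaddress` module can build that query name; the helper name `ptr_query_name` is an assumption made for this sketch.

```python
import ipaddress


def ptr_query_name(ip):
    """Return the reverse-DNS name that a PTR query for `ip` would ask about."""
    # reverse_pointer reverses the octets (or nibbles for IPv6) and appends
    # the in-addr.arpa / ip6.arpa suffix.
    return ipaddress.ip_address(ip).reverse_pointer


print(ptr_query_name("134.209.24.248"))  # 248.24.209.134.in-addr.arpa
```

This only constructs the name; resolving it still requires a DNS query such as the dig -x call shown above.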

What is the full domain returned when you query the mail records for facebook.com?

┌──(alex㉿htd)-[~]

└─$ dig +short MX facebook.com

10 [REDACTED]

Subdomain Bruteforcing

Subdomain brute-force enumeration is a highly effective active technique for discovering hidden subdomains of a target domain. This method relies on predefined wordlists containing potential subdomain names, which are systematically tested against the target domain to identify valid entries.

By using carefully curated wordlists, penetration testers can dramatically improve both the efficiency and success rate of their subdomain discovery, uncovering assets that may otherwise remain hidden and potentially exposing additional attack surfaces.

Using the known subdomains for example.com (www, ns1, ns2, ns3, blog, support, customer), find any missing subdomains by brute-forcing possible domain names. Provide your answer with the complete subdomain, e.g., www.example.com.

┌──(alex㉿htd)-[~]

└─$ gobuster dns -d example.com -w /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt

Gobuster v3.6

by OJ Reeves (@TheColonial) & Christian Mehlmauer (@firefart)

[+] Domain: example.com

[+] Threads: 10

[+] Timeout: 1s

[+] Wordlist: /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt

===============================================================

Starting gobuster in DNS enumeration mode

===============================================================

Found: ns1.example.com

Found: www.example.com

Found: ns2.example.com

Found: ns3.example.com

Found: blog.example.com

Found: support.example.com

Found: [REDACTED]

Found: customer.example.com

===============================================================
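The core loop behind wordlist-based enumeration is simple: prepend each word to the domain and check whether the resulting name resolves. The sketch below illustrates that idea only; it is not how Gobuster is implemented, and the function name, the injectable `resolve` parameter, and the fake resolver with its addresses are assumptions made so the example runs without network access.

```python
import socket


def brute_force_subdomains(domain, words, resolve=socket.gethostbyname):
    """Try word.domain for every word and return {hostname: address} for hits."""
    found = {}
    for word in words:
        word = word.strip()
        # Skip blank lines and comments that often appear in wordlists.
        if not word or word.startswith("#"):
            continue
        candidate = f"{word}.{domain}"
        try:
            found[candidate] = resolve(candidate)
        except OSError:
            # socket.gaierror is a subclass of OSError: the name did not resolve.
            continue
    return found


def fake_resolve(name):
    """Hypothetical stand-in resolver so the demo needs no DNS traffic."""
    table = {"www.example.test": "192.0.2.10", "dev.example.test": "192.0.2.20"}
    if name not in table:
        raise socket.gaierror("name not found")
    return table[name]


hits = brute_force_subdomains("example.test", ["www", "mail", "dev", ""], fake_resolve)
print(hits)
```

Leaving `resolve` at its default performs real lookups through the system resolver, so it should only be pointed at domains that are in scope.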

DNS Zone Transfers

While brute-forcing subdomains can be effective, there’s a less intrusive and often more efficient method: DNS zone transfers.

Originally designed to replicate DNS records between authoritative name servers, a misconfigured zone transfer can unintentionally reveal a complete list of subdomains and DNS entries. For penetration testers, this misconfiguration can become a treasure trove of information, allowing the discovery of subdomains without generating large amounts of traffic or triggering alarms.

After performing a zone transfer for the domain example.htd on the target system, how many DNS records are retrieved from the target system’s name server? Provide your answer as an integer, e.g., 123.

$ dig axfr @127.0.0.1 example.htd

; <<>> DiG 9.20.9-1-Debian <<>> axfr @127.0.0.1 example.htd

;; (1 server found)

[REDACTED]

;; SERVER: 127.0.0.1#53(127.0.0.1) (TCP)

;; WHEN: Fri Aug 29 18:09:26 -03 2025

;; XFR size: 22 records (messages 1, bytes 594)

Within the zone record transferred above, find the IP address for ftp.admin.example.htd. Respond only with the IP address, e.g., 127.0.0.1.

$ dig axfr @127.0.0.1 example.htd

; <<>> DiG 9.20.9-1-Debian <<>> axfr @127.0.0.1 example.htd

;; global options: +cmd

example.htd. 604800 IN SOA example.htd. root.example.htd. 2 604800 86400 2419200 604800

example.htd. 604800 IN NS ns.example.htd.

example.htd. 604800 IN A 127.0.0.1

ftp.admin.example.htd. 604800 IN A [REDACTED]

careers.example.htd. 604800 IN A 127.0.0.1

dc1.example.htd. 604800 IN A 127.0.0.1

dc2.example.htd. 604800 IN A 127.0.0.1

internal.example.htd. 604800 IN A 127.0.0.1

admin.internal.example.htd. 604800 IN A 127.0.0.1

wsus.internal.example.htd. 604800 IN A 127.0.0.1

ir.example.htd. 604800 IN A 127.0.0.1

dev.ir.example.htd. 604800 IN A 127.0.0.1

ns.example.htd. 604800 IN A 127.0.0.1

resources.example.htd. 604800 IN A 127.0.0.1

securemessaging.example.htd. 604800 IN A 127.0.0.1

test1.example.htd. 604800 IN A 127.0.0.1

us.example.htd. 604800 IN A 127.0.0.1

cluster1.us.example.htd. 604800 IN A 127.0.0.1

messagcenter.us.example.htd. 604800 IN A 127.0.0.1

ww02.example.htd. 604800 IN A 127.0.0.1

www1.example.htd. 604800 IN A 127.0.0.1

example.htd. 604800 IN SOA example.htd. root.example.htd. 2 604800 86400 2419200 604800

;; Query time: 136 msec

;; SERVER: 127.0.0.1#53(127.0.0.1) (TCP)

;; WHEN: Fri Aug 29 18:09:26 -03 2025

;; XFR size: 22 records (messages 1, bytes 594)

Within the same zone record, identify the largest IP address allocated within the 127.0.0.1 IP range. Respond with the full IP address, e.g., 127.0.0.1.

$ dig axfr @127.0.0.1 example.htd

; <<>> DiG 9.20.9-1-Debian <<>> axfr @127.0.0.1 example.htd

;; (1 server found)

;; global options: +cmd

example.htd. 604800 IN SOA example.htd. root.example.htd. 2 604800 86400 2419200 604800

example.htd. 604800 IN NS ns.example.htd.

example.htd. 604800 IN A 127.0.0.1

ftp.admin.example.htd. 604800 IN A 127.0.0.1

careers.example.htd. 604800 IN A 127.0.0.1

dc1.example.htd. 604800 IN A 127.0.0.1

dc2.example.htd. 604800 IN A 127.0.0.1

internal.example.htd. 604800 IN A 127.0.0.1

admin.internal.example.htd. 604800 IN A 127.0.0.1

wsus.internal.example.htd. 604800 IN A 127.0.0.1

ir.example.htd. 604800 IN A 127.0.0.1

dev.ir.example.htd. 604800 IN A 127.0.0.1

ns.example.htd. 604800 IN A 127.0.0.1

resources.example.htd. 604800 IN A 127.0.0.1

securemessaging.example.htd. 604800 IN A 127.0.0.1

test1.example.htd. 604800 IN A 127.0.0.1

us.example.htd. 604800 IN A 127.0.0.1

cluster1.us.example.htd. 604800 IN A [REDACTED]

messagcenter.us.example.htd. 604800 IN A 127.0.0.1

ww02.example.htd. 604800 IN A 127.0.0.1

www1.example.htd. 604800 IN A 127.0.0.1

example.htd. 604800 IN SOA example.htd. root.example.htd. 2 604800 86400 2419200 604800

;; Query time: 136 msec

;; SERVER: 127.0.0.1#53(127.0.0.1) (TCP)

;; WHEN: Fri Aug 29 18:09:26 -03 2025

;; XFR size: 22 records (messages 1, bytes 594)
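Counting records and finding the largest address by eye is error-prone once a zone grows. The following sketch parses saved dig AXFR output and answers both questions; the function names and the sample zone (zone.test with 192.0.2.x addresses) are hypothetical. Addresses are compared with `ipaddress` because plain string comparison would rank "192.0.2.9" above "192.0.2.200".

```python
import ipaddress


def parse_zone_records(dig_output):
    """Return (name, ttl, class, type, rdata) tuples from dig AXFR text."""
    records = []
    for line in dig_output.splitlines():
        line = line.strip()
        # dig prefixes its own commentary with ';'.
        if not line or line.startswith(";"):
            continue
        parts = line.split(None, 4)
        if len(parts) == 5 and parts[1].isdigit() and parts[2] == "IN":
            name, ttl, rclass, rtype, rdata = parts
            records.append((name, int(ttl), rclass, rtype, rdata))
    return records


def largest_a_record(records):
    """Return (name, address) of the numerically largest A record, or None."""
    a_records = [(r[0], r[4]) for r in records if r[3] == "A"]
    if not a_records:
        return None
    return max(a_records, key=lambda item: ipaddress.IPv4Address(item[1]))


# Hypothetical transfer output using documentation-range addresses.
SAMPLE = """\
; <<>> DiG <<>> axfr @192.0.2.53 zone.test
zone.test. 604800 IN SOA zone.test. root.zone.test. 2 604800 86400 2419200 604800
zone.test. 604800 IN NS ns.zone.test.
ns.zone.test. 604800 IN A 192.0.2.53
ftp.zone.test. 604800 IN A 192.0.2.9
db.zone.test. 604800 IN A 192.0.2.200
zone.test. 604800 IN SOA zone.test. root.zone.test. 2 604800 86400 2419200 604800
;; XFR size: 6 records (messages 1, bytes 300)
"""

records = parse_zone_records(SAMPLE)
print(len(records))
print(largest_a_record(records))
```

The record count includes both the opening and closing SOA records, which is consistent with how the XFR size line in the sample reports six records.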

Virtual Hosts

Once DNS directs traffic to the correct server, the web server configuration plays a key role in determining how incoming requests are handled. Popular web servers like Apache, Nginx, or IIS are capable of hosting multiple websites or applications on a single machine.

This is made possible through virtual hosting, which allows the server to differentiate between domains, subdomains, or entirely separate websites, each serving distinct content. Understanding virtual hosting is crucial for penetration testers, as it can reveal hidden applications or subdomains that might otherwise go unnoticed.

Brute-force vhosts on the target system. What is the full subdomain that is prefixed with “web”? Answer using the full domain, e.g. “x.example.htd”

Add the target IP address and hostname to the /etc/hosts file.

GNU nano 8.4 /etc/hosts

127.0.0.1 localhost

127.0.1.1 kali.suricato kali

127.0.0.1 example.htd

# The following lines are desirable for IPv6 capable hosts

::1 localhost ip6-localhost ip6-loopback

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

Based on what we have seen about VHosts and Gobuster, the correct procedure would be:

$ gobuster vhost -u http://example.htd:47372 -w /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt --append-domain -t 50

Gobuster v3.6

by OJ Reeves (@TheColonial) & Christian Mehlmauer (@firefart)

[+] Url: http://example.htd:47372

[+] Method: GET

[+] Threads: 50

[+] Wordlist: /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt

[+] User Agent: gobuster/3.6

[+] Timeout: 10s

[+] Append Domain: true

===============================================================

Starting gobuster in VHOST enumeration mode

===============================================================

Found: blog.example.htd:47372 Status: 200 [Size: 98]

Found: support.example.htd:47372 Status: 200 [Size: 104]

Found: forum.example.htd:47372 Status: 200 [Size: 100]

Found: dev.example.htd:47372 Status: 200 [Size: 98]

Found: www.example.htd:47372 Status: 200 [Size: 102]

Found: [REDACTED]:47372 Status: 200 [Size: 186]

===============================================================
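Virtual host discovery works because the server chooses which site to serve from the HTTP Host header, not from the IP address. The sketch below illustrates one common heuristic: request the same URL with different Host values and report any response that differs from a baseline for a name that should not exist. It is not a description of Gobuster's internals; the function names, the baseline hostname, and the fake fetcher are assumptions, and port handling in the Host header is left out for brevity.

```python
import urllib.error
import urllib.request


def fetch_with_host(base_url, host, timeout=10.0):
    """Request base_url with an overridden Host header; return (status, body size)."""
    request = urllib.request.Request(base_url, headers={"Host": host})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status, len(response.read())
    except urllib.error.HTTPError as error:
        # Error statuses still carry a body worth comparing.
        return error.code, len(error.read())


def enumerate_vhosts(base_url, domain, words, fetch=fetch_with_host):
    """Return {host: (status, size)} for hosts whose response differs from the baseline."""
    baseline = fetch(base_url, f"nonexistent-baseline.{domain}")
    hits = {}
    for word in words:
        word = word.strip()
        if not word:
            continue
        host = f"{word}.{domain}"
        result = fetch(base_url, host)
        if result != baseline:
            hits[host] = result
    return hits


def fake_fetch(base_url, host):
    """Hypothetical offline fetcher: only 'blog' is served as a distinct vhost."""
    return (200, 98) if host == "blog.example.test" else (200, 50)


vhosts = enumerate_vhosts("http://example.test", "example.test", ["blog", "nope"], fake_fetch)
print(vhosts)
```

Comparing on status and size alone is a simplification; pages with dynamic content may need a looser comparison.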

Brute-force vhosts on the target system. What is the full subdomain that is prefixed with “vm”? Answer using the full domain, e.g. “x.example.htd”

$ gobuster vhost -u http://example.htd:47372 -w /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt --append-domain -t 50

Gobuster v3.6

by OJ Reeves (@TheColonial) & Christian Mehlmauer (@firefart)

[+] Url: http://example.htd:47372

[+] Method: GET

[+] Threads: 50

[+] Wordlist: /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt

[+] User Agent: gobuster/3.6

[+] Timeout: 10s

[+] Append Domain: true

===============================================================

Starting gobuster in VHOST enumeration mode

===============================================================

Found: blog.example.htd:47372 Status: 200 [Size: 98]

Found: support.example.htd:47372 Status: 200 [Size: 104]

Found: admin.example.htd:47372 Status: 200 [Size: 100]

Found: forum.example.htd:47372 Status: 200 [Size: 100]

Found: [REDACTED]:47372 Status: 200 [Size: 98]

Found: www.example.htd:47372 Status: 200 [Size: 102]

Found: browse.example.htd:47372 Status: 200 [Size: 102]

Found: web1711.example.htd:47372 Status: 200 [Size: 106]

Brute-force vhosts on the target system. What is the full subdomain that is prefixed with “br”? Answer using the full domain, e.g. “x.example.htd”

$ gobuster vhost -u http://example.htd:47372 -w /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt --append-domain -t 50

Gobuster v3.6

by OJ Reeves (@TheColonial) & Christian Mehlmauer (@firefart)

[+] Url: http://example.htd:47372

[+] Method: GET

[+] Threads: 50

[+] Wordlist: /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt

[+] User Agent: gobuster/3.6

[+] Timeout: 10s

[+] Append Domain: true

===============================================================

Starting gobuster in VHOST enumeration mode

===============================================================

Found: blog.example.htd:47372 Status: 200 [Size: 98]

Found: support.example.htd:47372 Status: 200 [Size: 104]

Found: admin.example.htd:47372 Status: 200 [Size: 100]

Found: forum.example.htd:47372 Status: 200 [Size: 100]

Found: dev.example.htd:47372 Status: 200 [Size: 98]

Found: www.example.htd:47372 Status: 200 [Size: 102]

Found: [REDACTED]:47372 Status: 200 [Size: 102]

Found: web1711.example.htd:47372 Status: 200 [Size: 106]

Brute-force vhosts on the target system. What is the full subdomain that is prefixed with “a”? Answer using the full domain, e.g. “x.example.htd”

$ gobuster vhost -u http://example.htd:47372 -w /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt --append-domain -t 50

Gobuster v3.6

by OJ Reeves (@TheColonial) & Christian Mehlmauer (@firefart)

[+] Url: http://example.htd:47372

[+] Method: GET

[+] Threads: 50

[+] Wordlist: /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt

[+] User Agent: gobuster/3.6

[+] Timeout: 10s

[+] Append Domain: true

===============================================================

Starting gobuster in VHOST enumeration mode

===============================================================

Found: blog.example.htd:47372 Status: 200 [Size: 98]

Found: support.example.htd:47372 Status: 200 [Size: 104]

Found: [REDACTED]:47372 Status: 200 [Size: 100]

Found: vms.example.htd:47372 Status: 200 [Size: 98]

Found: browse.example.htd:47372 Status: 200 [Size: 102]

Found: web1711.example.htd:47372 Status: 200 [Size: 106]

[ERR] Get "http://example.htd:47372/": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

[ERR] Get "http://example.htd:47372/": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

[ERR] Get "http://example.htd:47372/": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

[ERR] Get "http://example.htd:47372/": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

[ERR] Get "http://example.htd:47372/": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

[ERR] Get "http://example.htd:47372/": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

Brute-force vhosts on the target system. What is the full subdomain that is prefixed with “su”? Answer using the full domain, e.g. “x.example.htd”

$ gobuster vhost -u http://example.htd:47372 -w /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt --append-domain -t 50

Gobuster v3.6

by OJ Reeves (@TheColonial) & Christian Mehlmauer (@firefart)

[+] Url: http://example.htd:47372

[+] Method: GET

[+] Threads: 50

[+] Wordlist: /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt

[+] User Agent: gobuster/3.6

[+] Timeout: 10s

[+] Append Domain: true

===============================================================

Starting gobuster in VHOST enumeration mode

===============================================================

Found: blog.example.htd:47372 Status: 200 [Size: 98]

Found: [REDACTED]:47372 Status: 200 [Size: 104]

Found: forum.example.htd:47372 Status: 200 [Size: 100]

Found: vms.example.htd:47372 Status: 200 [Size: 98]

Found: browse.example.htd:47372 Status: 200 [Size: 102]

Found: web1711.example.htd:47372 Status: 200 [Size: 106]

Fingerprinting

Fingerprinting is the process of extracting technical details about the technologies that power a website or web application. Just like a human fingerprint uniquely identifies a person, the digital signatures of web servers, operating systems, and software components can reveal valuable insights into a target’s infrastructure and potential security weaknesses.

This information allows penetration testers (and attackers) to tailor their approach and focus on vulnerabilities specific to the identified technologies.

Why Fingerprinting is Essential in Web Reconnaissance

- Targeted Attacks: Knowing the exact technologies in use enables attackers to focus on exploits and vulnerabilities that are relevant, increasing the likelihood of a successful compromise.

- Identifying Misconfigurations: Fingerprinting can uncover outdated software, default settings, or misconfigurations that might not be obvious through other methods.

- Prioritizing Targets: When multiple systems are in scope, fingerprinting helps determine which targets are more likely to be vulnerable or contain valuable information.

- Building a Comprehensive Profile: Combining fingerprinting results with other reconnaissance data creates a holistic view of the target’s infrastructure, helping testers understand the overall security posture and potential attack vectors.

By mastering fingerprinting, you can turn seemingly small technical details into actionable intelligence for penetration testing.

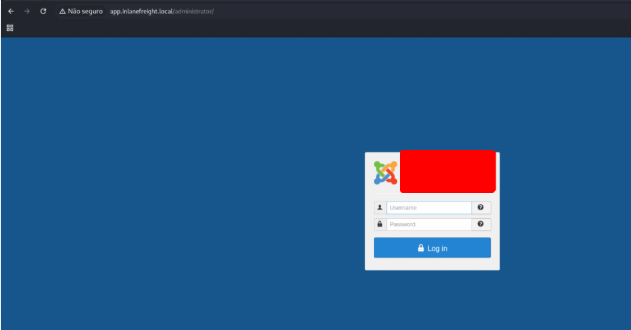

Determine the Apache version running on app.example.local on the target system. (Format: 0.0.0)

Add both hostnames to the /etc/hosts file:

GNU nano 8.4 /etc/hosts *

127.0.0.1 localhost

127.0.0.1 app.example.local

127.0.0.1 dev.example.local

# The following lines are desirable for IPv6 capable hosts

::1 localhost ip6-localhost ip6-loopback

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

As in the earlier example.com exercise, curl -I retrieves the server banner:

$ curl -I http://app.example.local

HTTP/1.1 200 OK

Date: Fri, 29 Aug 2025 22:04:46 GMT

Server: Apache/[REDACTED] (Ubuntu)

Set-Cookie: 7z4or202k1272e581a49f5c56de40=grj1ljhhk1cm9d14scnfrc7koc; path=/; HttpOnly

Permissions-Policy: interest-cohort=()

Expires: Wed, 17 Aug 2005 00:00:00 GMT

Last-Modified: Fri, 29 Aug 2025 22:04:53 GMT

Cache-Control: no-store, no-cache, must-revalidate, post-check=0, pre-check=0

Pragma: no-cache

Content-Type: text/html; charset=utf-8

Which CMS is used on app.example.local on the target system? Respond with the name only, e.g., WordPress.

Open the address in the browser and find the flag.

On which operating system is the dev.example.local webserver running in the target system? Respond with the name only, e.g., Debian.

$ curl -I http://app.example.local

HTTP/1.1 200 OK

Date: Fri, 29 Aug 2025 22:04:46 GMT

Server: Apache/2.4.41 ([REDACTED])

Set-Cookie: 72af8f2b2e1272e581a49f5c56de40=grj1ljhhk1cm9di4scnfrc7koc; path=/; HttpOnly

Permissions-Policy: interest-cohort=()

Expires: Wed, 17 Aug 2005 00:00:00 GMT

Last-Modified: Fri, 29 Aug 2025 22:04:53 GMT

Cache-Control: no-store, no-cache, must-revalidate, post-check=0, pre-check=0

Pragma: no-cache

Content-Type: text/html; charset=utf-8
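The Server header typically packs the product, version, and a parenthesised platform hint into one string. A minimal sketch of splitting such a banner is shown below; the `ServerBanner` type, the regular expression, and the sample values are hypothetical, and real banners vary widely or may be suppressed altogether.

```python
import re
from typing import NamedTuple, Optional


class ServerBanner(NamedTuple):
    product: str
    version: Optional[str]
    comment: Optional[str]


# product[/version] [(comment)] -- a simplified pattern, not a full HTTP grammar.
_BANNER = re.compile(
    r"^(?P<product>[^/\s]+)(?:/(?P<version>\S+))?(?:\s+\((?P<comment>[^)]*)\))?"
)


def parse_server_header(value):
    """Split a Server header value into product, version and comment parts."""
    match = _BANNER.match(value.strip())
    if match is None:
        return None
    return ServerBanner(match["product"], match["version"], match["comment"])


# Hypothetical banner value used purely for illustration.
banner = parse_server_header("Apache/2.4.0 (Debian)")
print(banner)
```

Because administrators can edit or remove this header, the parsed values are best treated as a lead to confirm with other fingerprinting techniques.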

Creepy Crawlies

Web crawling can be a vast and complex task, but you don’t have to tackle it manually. A wide range of web crawling tools exists to help you navigate and map websites efficiently.

These tools automate the crawling process, saving time and effort while allowing you to focus on analyzing the data they collect. From discovering hidden pages to mapping site structure and links, web crawlers are an essential part of effective web reconnaissance.

After spidering example.com, identify the location where future reports will be stored. Respond with the full domain, e.g., files.example.com.

Download the Python script:

wget -O ReconSpider.zip https://academy.hackthebox.com/storage/modules/144/ReconSpider.v1.2.zip

Unzip the downloaded file:

unzip ReconSpider.zip

Run the script against the target domain:

python3 ReconSpider.py http://example.com

┌──(alex㉿htd)-[~]

└─$ python3 ReconSpider.py http://example.com

2025-08-29 19:21:06 [scrapy.utils.log] INFO: Scrapy 2.12.0 started (bot: scrapybot)

2025-08-29 19:21:06 [scrapy.utils.log] INFO: Versions: lxml 5.4.0.0, libxml2 2.9.14, cssselect 1.3.0, parsel 1.10.0, w3lib 2.3.1, Twisted 24.11.0, Python 3.12.3 (main, Apr 8 2025, 12:45:00) [GCC 13.2.0], cryptography 43.0.0, Platform Linux-6.12.25-amd64-x86_64-with-glibc2.41

2025-08-29 19:21:06 [scrapy.addons] INFO: Enabled addons:

[]

2025-08-29 19:21:06 [scrapy.extensions.telnet] INFO: Telnet Password: dd524f4f3159ee

2025-08-29 19:21:06 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.corestats.CoreStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.memusage.MemoryUsage',

'scrapy.extensions.logstats.LogStats']

2025-08-29 19:21:06 [scrapy.crawler] INFO: Overridden settings:

{'LOG_LEVEL': 'INFO'}

2025-08-29 19:21:06 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.offsite.OffsiteMiddleware',

'scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'main.CustomOffsiteMiddleware']

The script creates a file called results.json. Search this file for the address.

"js_files": [

"https://www.example.com/wp-content/themes/bem_theme/js/owl.carousel.min.js?ver=5.6.15",

"https://www.example.com/wp-includes/js/jquery/jquery.js?ver=3.6.1",

"https://www.example.com/wp-content/themes/bem_theme/js/bootstrap.min.js?ver=5.6.15",

"https://www.example.com/wp-content/themes/bem_theme/js/main.js?ver=5.6.15",

"https://www.example.com/wp-content/themes/bem_theme/js/jquery.sticky.js?ver=5.6.15",

"https://www.example.com/wp-content/themes/bem_theme/js/jquery.smartmenus.js?ver=5.6.15",

"https://www.example.com/wp-content/themes/bem_theme/js/jquery.smartmenus.bootstrap.js?ver=5.6.15"

],

"form_fields": [],

"images": [

"https://www.example.com/wp-content/uploads/2013/03/AboutUs_01-1024x511.png",

"https://www.example.com/wp-content/uploads/2013/03/AboutUs_02-1024x511.png",

"https://www.example.com/wp-content/uploads/2013/03/AboutUs_03-1024x511.png",

"https://www.example.com/wp-content/uploads/2013/03/AboutUs_04-1024x511.png",

"https://www.example.com/wp-content/uploads/2013/03/AboutUs_05-1024x511.png",

"https://www.example.com/wp-content/uploads/2013/03/AboutUs_06-1024x511.png",

"https://www.example.com/wp-content/uploads/2013/03/Offices_01-1024x539.png"

],

"videos": [],

"audios": [],

"comments": [

"--------------------------------------------",

"---------- transporteX-FOOTER AREA ----------",

"--------------------------------------------",

"change Jeremy's email to jeremy-cobian@example.com",

"--------------------------------------------",

"---------- TO-DO: change the location of future reports [REDACTED]"

]
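Rather than scrolling through the whole file, the comments array can be filtered for keywords. The sketch below assumes results.json contains a top-level "comments" list as in the excerpt above; the function name `find_comments` and the sample data are hypothetical.

```python
import json


def find_comments(results_json, keyword):
    """Return the crawled HTML comments that contain `keyword` (case-insensitive)."""
    data = json.loads(results_json)
    needle = keyword.lower()
    return [c for c in data.get("comments", []) if needle in c.lower()]


# Hypothetical results.json content with the same top-level keys as above.
SAMPLE = json.dumps(
    {
        "js_files": [],
        "comments": [
            "---------- FOOTER AREA ----------",
            "TO-DO: move the staging reports to files.example.test",
        ],
    }
)

matches = find_comments(SAMPLE, "to-do")
print(matches)
```

To run it against the real crawl output, the file's text can be read first, for instance with `pathlib.Path("results.json").read_text()`, and passed in as `results_json`.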

Web Archives

In today’s fast-moving digital landscape, websites often appear and disappear, leaving behind only fleeting traces of their presence. Luckily, the Internet Archive’s Wayback Machine offers a window into the past, allowing us to explore historical snapshots of websites as they once existed.

For penetration testers and researchers, this tool is invaluable for uncovering old pages, hidden directories, and forgotten content that might reveal insights about a target’s infrastructure or past vulnerabilities.

How many Pen Testing Labs did HackTheBox have on the 8th August 2018? Answer with an integer, e.g., 1234.

To verify the number of Pen Testing Labs Hack The Box had on August 8, 2018, you can use the Wayback Machine to view archived versions of their website from that date. Here’s how:

- Visit the Wayback Machine.

- Enter the URL: https://www.hackthebox.eu/ into the search bar.

- Select the calendar date closest to August 8, 2018.

- Navigate to the section listing the Pen Testing Labs to find the number available at that time.

- You’ll find a number somewhere between 70 and 75 😀

[Redacted]

How many members did HackTheBox have on the 10th June 2017? Answer with an integer, e.g., 1234.

On 10th June 2017, Hack The Box (HTD) had 3,054 members.

This number reflects the early stage of the platform, shortly after its launch, before it grew into the much larger community it has today. You can verify this information by checking archived versions of the HTD website from that period using the Wayback Machine, entering the HTD URL, and selecting the snapshot from 10th June 2017 to see the member count at that time.

[Redacted]

Going back to March 2002, what website did the facebook.com domain redirect to? Answer with the full domain, e.g., http://www.facebook.com/

In March 2002, the domain facebook.com redirected to http://site.aboutface.com/. At that time the domain, first registered on March 29, 1997, belonged to AboutFace Corporation and had no connection to the later social network, which launched as “TheFacebook” in February 2004 and acquired facebook.com in 2005.

You can verify this by accessing the Wayback Machine and viewing the snapshot from March 30, 2002:

http://web.archive.org/web/20020330205744/http://www.facebook.com/

This snapshot shows the domain redirecting to http://site.aboutface.com/.
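The snapshot link above follows a predictable pattern: a 14-digit timestamp (YYYYMMDDhhmmss) followed by the original URL. A small sketch for building such links is shown below; the helper name `wayback_snapshot_url` is an assumption, and the Wayback Machine generally redirects to the closest capture when no snapshot exists at the exact timestamp.

```python
from datetime import datetime


def wayback_snapshot_url(url, when):
    """Build a Wayback Machine link for `url` at the given moment."""
    # The archive path embeds the timestamp as YYYYMMDDhhmmss.
    return f"http://web.archive.org/web/{when:%Y%m%d%H%M%S}/{url}"


link = wayback_snapshot_url("http://www.facebook.com/", datetime(2002, 3, 30, 20, 57, 44))
print(link)  # http://web.archive.org/web/20020330205744/http://www.facebook.com/
```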

[Redacted]

According to the paypal.com website in October 1999, what could you use to “beam money to anyone”? Answer with the product name, e.g., My Device, and remove the ™ from your answer.

In October 1999, according to the PayPal website, you could use the Palm Organizer to “beam money to anyone.”

This referred to PayPal’s early mobile payment system, which allowed users to transfer funds between Palm devices using infrared (IR) technology. It was one of the first attempts at peer-to-peer mobile payments, enabling users to “beam” money directly from one device to another.

[Redacted]

Going back to November 1998 on google.com, what address hosted the non-alpha “Google Search Engine Prototype” of Google? Answer with the full address, e.g., http://google.com

In November 1998, the non-alpha “Google Search Engine Prototype” was hosted at:

This early version of Google was developed by Larry Page and Sergey Brin as part of their research project at Stanford University. It utilized the PageRank algorithm to rank web pages based on their link structures, marking a significant advancement in search engine technology at the time. The prototype was accessible through Stanford’s domain, reflecting its academic origins.

You can view an archived version of this early Google prototype using the Wayback Machine. Simply enter the URL http://google.stanford.edu/ and select a snapshot from November 1998 to experience the search engine as it appeared during that period.

Going back to March 2000 on www.iana.org, when exactly was the site last updated? Answer with the date in the footer, e.g., 11-March-99

Going back to March 2000 on www.iana.org, the site’s footer indicated that it was last updated on 17-December-99.

This date reflects the most recent update to the website at that time. You can verify this by accessing archived versions of the IANA website from March 2000 using the Wayback Machine. By entering http://www.iana.org/ and selecting a snapshot from that period, you can view the site as it appeared and confirm the footer date.

[Redacted]

According to the wikipedia.com snapshot taken on February 9, 2003, how many articles were they already working on in the English version? Answer with the number they state without any commas, e.g., 100000, not 100,000.

According to the Wikipedia snapshot taken on February 9, 2003, the English version of Wikipedia was already working on 3000 articles.

This number reflects the early growth of Wikipedia in its initial years, showing that even by 2003, the platform had begun to accumulate a substantial number of articles. You can verify this information by accessing the Wayback Machine, entering https://www.wikipedia.com/, and selecting the snapshot from February 9, 2003 to see the original page.

[Redacted]

Skills Assessment

To complete this module’s skills assessment, you’ll need to apply the techniques you’ve learned, including:

- Using whois to gather domain registration details

- Analyzing robots.txt files to identify restricted or interesting paths

- Performing subdomain brute-forcing to uncover hidden assets

- Crawling websites and analyzing results to map out structure and content

Demonstrate your proficiency by effectively combining these methods. As you discover subdomains, don’t forget to add them to your /etc/hosts file to streamline testing and further exploration.

This assessment is designed to test your ability to apply web reconnaissance skills in a practical, real-world scenario, reinforcing both your technical knowledge and analytical thinking.
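Adding each discovered subdomain to /etc/hosts by hand gets tedious as the list grows. The sketch below generates the lines to append; the function name `hosts_entries`, the address, and the hostnames are hypothetical, and the output still has to be written to /etc/hosts manually with appropriate privileges.

```python
import ipaddress


def hosts_entries(ip, hostnames):
    """Return de-duplicated '/etc/hosts' lines mapping each hostname to `ip`."""
    # Raises ValueError early if the address is malformed.
    ipaddress.ip_address(ip)
    unique = sorted({name.strip().lower() for name in hostnames if name.strip()})
    return [f"{ip} {name}" for name in unique]


# Hypothetical target address and discovered names.
lines = hosts_entries("10.0.0.5", ["Dev.example.test", "dev.example.test ", "api.example.test"])
print("\n".join(lines))
```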

What is the IANA ID of the registrar of the example.com domain?

Here’s the step-by-step process I would use (and what tools you can use too):

- WHOIS Query:

  - You can run a command like whois example.com or use an online WHOIS service (like whois.domaintools.com or vedbex.com).

- Locate the Registrar Info:

  - In the WHOIS output, look for the Registrar or Sponsoring Registrar section.

  - This section includes the IANA ID, which is a unique numeric identifier assigned to each ICANN-accredited registrar.

- Identify the IANA ID:

  - For example.com, the WHOIS information shows IANA ID 468, which corresponds to the registrar managing the domain registration.

This method is standard in web reconnaissance and OSINT to verify domain ownership, registrar details, and sometimes contact information for further testing.

$ whois example.com

Domain Name: EXAMPLE.COM

Registry Domain ID: 2420436757_DOMAIN_COM-VRSN

Registrar WHOIS Server: whois.registrar.amazon

Registrar URL: http://registrar.amazon.com

Updated Date: 2025-07-01T22:45:43Z

Creation Date: 2019-08-05T22:43:09Z

Registry Expiry Date: 2026-08-05T22:43:09Z

Registrar: Amazon Registrar, Inc.

Registrar IANA ID: [REDACTED]

Registrar Abuse Contact Email: trustandsafety@support.aws.com

Registrar Abuse Contact Phone: +1.2024422253

Domain Status: clientDeleteProhibited https://icann.org/epp#clientDeleteProhibited

Domain Status: clientTransferProhibited https://icann.org/epp#clientTransferProhibited

Domain Status: clientUpdateProhibited https://icann.org/epp#clientUpdateProhibited

Name Server: NS-1303.AWSDNS-34.ORG

Name Server: NS-1580.AWSDNS-05.CO.UK

Name Server: NS-161.AWSDNS-20.COM

Name Server: NS-671.AWSDNS-19.NET

DNSSEC: unsigned
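If you want to pull the field out programmatically rather than by eye, a minimal Python sketch follows. The sample text and the ID 9999 are made-up placeholders, and the function name registrar_iana_id is a hypothetical helper, not part of any WHOIS tool.

```python
import re

# Hypothetical WHOIS excerpt; the ID below is a placeholder, not a real answer.
SAMPLE_WHOIS = """\
Domain Name: EXAMPLE.TEST
Registrar: Example Registrar, Inc.
Registrar IANA ID: 9999
Registrar Abuse Contact Email: abuse@example.test
"""


def registrar_iana_id(whois_text):
    """Return the registrar IANA ID as a string, or None if no numeric ID is present."""
    match = re.search(
        r"^\s*Registrar IANA ID:\s*(\d+)\s*$",
        whois_text,
        re.MULTILINE | re.IGNORECASE,
    )
    return match.group(1) if match else None
```

To use it on live data, you could capture the output of the whois command (for example with subprocess.run and capture_output=True) and pass the resulting text to the function.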

What http server software is powering the example.htd site on the target system? Respond with the name of the software, not the version, e.g., Apache.

Add the target IP and the example.htd hostname to the /etc/hosts file:

GNU nano 8.4 /etc/hosts *

127.0.0.1 localhost

127.0.1.1 kali.suricato kali

127.0.0.1 example.htd

# The following lines are desirable for IPv6 capable hosts

::1 localhost ip6-localhost ip6-loopback

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters
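As subdomains pile up, it is easy to lose track of which ones are already mapped. The sketch below, with hypothetical helper names, reads hosts-file content and returns the lines that still need to be appended. It only builds the lines; writing to /etc/hosts is left to you, since that requires root.

```python
def mapped_hostnames(hosts_text):
    """Return the set of hostnames that appear in hosts-file content."""
    names = set()
    for line in hosts_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        fields = line.split()
        names.update(fields[1:])  # the first field is the IP address
    return names


def missing_entries(hosts_text, ip, hostnames):
    """Build the hosts-file lines still needed for the given hostnames."""
    known = mapped_hostnames(hosts_text)
    return [f"{ip} {name}" for name in hostnames if name not in known]
```

Calling missing_entries with the text of /etc/hosts, the target IP, and the list of discovered hostnames gives you the exact lines to paste into the file.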

$ curl -I http://example.htd:44038

HTTP/1.1 200 OK

Server: [REDACTED]

Date: Fri, 29 Aug 2025 23:05:55 GMT

Content-Type: text/html

Content-Length: 120

Last-Modified: Thu, 01 Aug 2024 09:35:23 GMT

Connection: keep-alive

ETag: "66ab56db-78"

Accept-Ranges: bytes
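The question asks for the software name without the version, so the Server header value has to be trimmed. A small sketch of that step, assuming the headers are available as raw text (as printed by curl -I); the function name and the sample product in the usage note are hypothetical:

```python
def server_software(raw_headers):
    """Return the product name from the Server header, without the version."""
    for line in raw_headers.splitlines():
        name, sep, value = line.partition(":")
        if sep and name.strip().lower() == "server":
            value = value.strip()
            product = value.split()[0] if value else ""
            # "Product/1.2.3" -> "Product"; an empty header yields None
            return product.split("/", 1)[0] or None
    return None
```

For a header such as "Server: ExampleHTTPd/1.2.3 (Unix)", this returns "ExampleHTTPd". Keep in mind that servers can omit or falsify this header, so treat it as a hint rather than proof.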

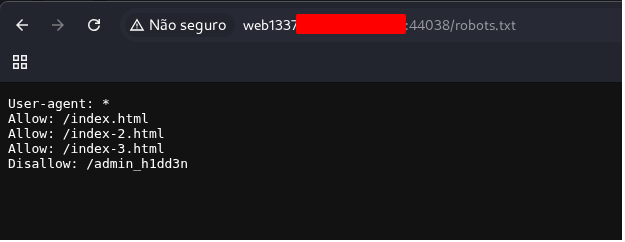

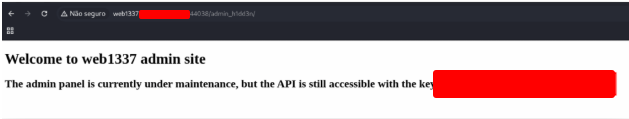

What is the API key in the hidden admin directory that you have discovered on the target system?

It seems like even with the big.txt wordlist, Gobuster didn’t find any additional directories besides /index.html. This usually means one of two things:

- The hidden admin directory only responds when the correct vHost is set.

  - Many labs (like HTD) configure sites to only serve certain paths when the correct virtual host is used.

  - Your target IP is 127.0.0.1:44038 and the vHost is example.htd.

- The directory has an unusual name not present in standard wordlists.

Next Step: Use the vHost header

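Rerun Gobuster in vhost mode, which sends each wordlist entry (with the base domain appended) as a candidate Host header to discover virtual hosts served on the same IP and port: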

$ gobuster vhost -u http://example.htd:44038 -w /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt --append-domain -t 50

Gobuster v3.6

by OJ Reeves (@TheColonial) & Christian Mehlmauer (@firefart)

[+] Url: http://example.htd:44038

[+] Method: GET

[+] Threads: 50

[+] Wordlist: /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt

[+] User Agent: gobuster/3.6

[+] Timeout: 10s

[+] Append Domain: true

===============================================================

Starting gobuster in VHOST enumeration mode

===============================================================

Progress: 80052 / 114443 (69.95%) [ERROR] Get "http://example.htd:44038/": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

Progress: 104540 / 114443 (91.35%) [ERROR] Get "http://example.htd:44038/": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

Progress: 105112 / 114443 (91.85%) [ERROR] Get "http://example.htd:44038/": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

Progress: 107939 / 114443 (93.84%) [ERROR] Get "http://example.htd:44038/": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

Found: web1337.example.htd:44038 Status: 200 [Size: 104]

Progress: 108880 / 114443 (95.14%) [ERROR] Get "http://example.htd:44038/": context deadline exceeded (Client.Timeout exceeded while awaiting headers)

Progress: 110768 / 114443 (96.79%)^C
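To make the idea behind vhost enumeration concrete, here is a rough Python sketch. It is not Gobuster's implementation; the function names are hypothetical, and the requests are only built, not sent. Each candidate hostname goes into the Host header of a request to the same IP and port, and a response whose status or size differs from the baseline suggests a distinct virtual host.

```python
import urllib.request


def vhost_candidates(words, domain):
    """Append the base domain to each wordlist entry."""
    return [f"{word.strip()}.{domain}" for word in words if word.strip()]


def build_probe(ip, port, vhost):
    """Build (but do not send) a GET request to the IP with a custom Host header."""
    request = urllib.request.Request(f"http://{ip}:{port}/", method="GET")
    request.add_header("Host", f"{vhost}:{port}")
    return request


def differs_from_baseline(baseline, response):
    """Flag a (status, size) pair that differs from the baseline pair."""
    return response != baseline
```

In the run above, the default site returned 120 bytes while web1337.example.htd returned 104 bytes, so a comparison like differs_from_baseline((200, 120), (200, 104)) would flag it.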

Gobuster found the virtual host web1337.example.htd. Once it is added to /etc/hosts, we’ll search for subdirectories on that host; this is where FFUF really shows its strength.

GNU nano 8.4 /etc/hosts *

127.0.0.1 localhost

127.0.1.1 kali.suricato kali

127.0.0.1 example.htd

127.0.0.1 web1337.example.htd

# The following lines are desirable for IPv6 capable hosts

::1 localhost ip6-localhost ip6-loopback

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

From our reconnaissance, the path that catches our attention is robots.txt. If you’re unfamiliar with it, here’s a quick overview:

The robots.txt file is placed on websites to tell web crawlers and search engine bots which directories or pages they are allowed, or not allowed, to access.

Does it actually provide real security? Not really. In fact, it often highlights hidden or sensitive paths that the site owner prefers to keep private. For penetration testers, this can be a goldmine of information.

We can inspect it by visiting:

http://web1337.example.htd:<PORT>/robots.txt

Once there, you’ll notice an important detail that could guide our next steps in reconnaissance and exploitation.

Check each of the addresses to find the flag.
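Checking each address by hand works, but the list can also be built with a few lines of Python. This is a minimal sketch with hypothetical helper names; it assumes you have already saved the robots.txt content as text, and it handles only simple Disallow lines.

```python
from urllib.parse import urljoin


def disallowed_paths(robots_text):
    """Return the non-empty Disallow paths listed in robots.txt content."""
    paths = []
    for line in robots_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() == "disallow" and value.strip():
            paths.append(value.strip())
    return paths


def to_urls(base_url, paths):
    """Turn each path into a full URL on the given host."""
    return [urljoin(base_url, path) for path in paths]
```

Passing the base URL of the vhost (including the port) and the extracted paths to to_urls gives the list of addresses to visit.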

After crawling the example.htd domain on the target system, what is the email address you have found? Respond with the full email, e.g., mail@example.htd.

Time to go after the four “cubes” this challenge has to offer — the rewards are definitely worth it!

Our first step is to target the vhost:

web1337.example.htd

Using Gobuster

Why Gobuster? Besides directory and file brute-forcing, its vhost mode uncovers virtual hosts that aren’t linked anywhere on the website. Here we point it at web1337.example.htd to look for hosts nested one level deeper. Don’t worry about testing every possible name; we’ve already done the heavy lifting for you.

The wordlist is the same one used in the previous challenges. With it, Gobuster systematically tests candidate subdomains and reveals the hidden treasures the site is guarding.

$ gobuster vhost -u http://web1337.example.htd:44038 -w /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt --append-domain -t 50

Gobuster v3.6

by OJ Reeves (@TheColonial) & Christian Mehlmauer (@firefart)

[+] Url: http://web1337.example.htd:44038

[+] Method: GET

[+] Threads: 50

[+] Wordlist: /usr/share/seclists/Discovery/DNS/subdomains-top1million-110000.txt

[+] User Agent: gobuster/3.6

[+] Timeout: 10s

[+] Append Domain: true

===============================================================

Starting gobuster in VHOST enumeration mode

===============================================================

Found: dev.web1337.example.htd:44038 Status: 200 [Size: 123]

From this point on, tools like Scrapy and ReconSpider require installation, so I rely on Python virtual environments to manage them. If you’re unfamiliar, I’ve provided a step-by-step guide on how to set one up [here].

Why bother with a virtual environment? It’s considered best practice because it keeps your project dependencies isolated from the global Python environment. This prevents version conflicts, keeps your system tidy, and makes managing multiple projects or tools much simpler.

For the rest of this tutorial, I’ll assume you’re already inside your virtual environment, ready to install and run the reconnaissance tools we need.

$ python3 ReconSpider.py http://dev.web1337.example.htd:44038

2025-08-29 20:40:22 [scrapy.utils.log] INFO: Scrapy 2.12.0 started (bot: scrapybot)

2025-08-29 20:40:22 [scrapy.utils.log] INFO: Versions: lxml 5.4.0.0, libxml2 2.9.14, cssselect 1.3.0, parsel 1.10.0, w3lib 2.3.1, Twisted 24.11.0, Python 3.12.3 (main, Apr 8 2025), cryptography 43.0.0, Platform Linux-6.12.25-amd64-x86_64-with-glibc2.41

2025-08-29 20:40:22 [scrapy.addons] INFO: Enabled addons:

[]

2025-08-29 20:40:22 [scrapy.extensions.telnet] INFO: Telnet Password: 030f8cc1f8cad5fc

2025-08-29 20:40:22 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.corestats.CoreStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.memusage.MemoryUsage',

'scrapy.extensions.logstats.LogStats']

2025-08-29 20:40:22 [scrapy.crawler] INFO: Overridden settings:

{'LOG_LEVEL': 'INFO'}

2025-08-29 20:40:22 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.offsite.OffsiteMiddleware',

'scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'main.CustomOffsiteMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.cookies.CookiesMiddleware']


After the scan completes, ReconSpider saves the results to a JSON file, results.json. Let’s take a look and see what we uncovered; it answers not just this question, but the next one too!

You can review the results with a simple command:

cat results.json

This will display all the findings in your terminal: the emails, links, and comments the spider collected, along with fields such as external files, JavaScript files, and form fields. From here, you can analyze the data and plan your next steps in the reconnaissance process.

$ cat results.json

{

"emails": [

"[REDACTED]"

],

"links": [

"http://dev.web1337.example.htd:44038/index-114.html",

"http://dev.web1337.example.htd:44038/index-326.html",

"http://dev.web1337.example.htd:44038/index-403.html",

"http://dev.web1337.example.htd:44038/index-189.html",

"http://dev.web1337.example.htd:44038/index-77.html",

"http://dev.web1337.example.htd:44038/index-553.html"

]

}

What is the API key the example.htd developers will be changing to?

For this result, all you need to do is scroll to the end of the JSON document we just opened; the answer sits in the comments array.

Comments that developers leave behind in a page are often where the most interesting discoveries turn up, such as keys, credentials, or notes about upcoming changes.

],

"external_files": [],

"js_files": [],

"form_fields": [],

"images": [],

"videos": [],

"audio": [],

"comments": [

"Remember to change the API key to [REDACTED] "

]

}
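Instead of scrolling through the whole file, you can load it and print only the fields these two questions care about. A minimal sketch, assuming the key names shown in the output above; the sample values and the function name recon_summary are made up for illustration.

```python
import json

# Hypothetical content shaped like results.json; the values are placeholders.
SAMPLE_RESULTS = """
{
  "emails": ["someone@example.test"],
  "links": ["http://dev.example.test/index-1.html"],
  "js_files": [],
  "comments": ["hypothetical note left in the page source"]
}
"""


def recon_summary(results_text):
    """Return only the emails and comments from ReconSpider-style JSON text."""
    data = json.loads(results_text)
    return {key: data.get(key, []) for key in ("emails", "comments")}
```

With the real file, read results.json from disk and pass its text to recon_summary; missing keys simply come back as empty lists.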