1. Introduction: Why Docker Exists

In the history of software development, few tools have shifted the paradigm as drastically as Docker. Before Docker, the most common phrase heard in development offices was: “But it works on my machine!”

This problem arose because environments were inconsistent. A developer might write code on a Mac with Python 3.9 installed, send it to a QA tester using Windows with Python 3.8, and finally deploy it to a Linux server running Python 3.6. Incompatibilities were inevitable.

Docker solves this by allowing developers to package the application and its environment (dependencies, libraries, settings, and OS tools) into a single, lightweight unit called a Container. If it runs in a container on your laptop, it will run the same way in that container on a server, a cloud cluster, or your colleague’s machine, provided the host can run containers built for the same CPU architecture.

This guide will take you from zero knowledge to full proficiency in installing, running, and managing Docker containers.

2. Core Concepts: The “Recipe vs. Cake” Analogy

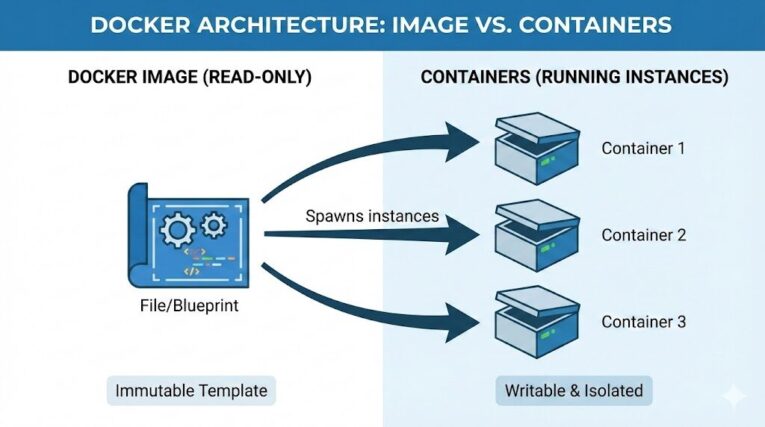

To use Docker effectively, you must understand the difference between its two primary components: Images and Containers.

2.1 The Docker Image (The Recipe)

An Image is a read-only template. It contains the source code, libraries, dependencies, tools, and other files needed for an application to run. You cannot “run” an image directly; it is just a set of files stored on your disk.

- Analogy: Think of the Image as a Recipe in a cookbook. You can read it, share it, and copy it, but you cannot eat it.

2.2 The Docker Container (The Cake)

A Container is the running instance of an image. It is a lightweight, standalone, executable package of software. You can start, stop, move, and delete a container.

- Analogy: Think of the Container as the Cake baked using the recipe. You can bake ten identical cakes (containers) from a single recipe (image).

2.3 Virtual Machines vs. Containers

This is a crucial distinction.

- Virtual Machines (VMs): Virtualize the hardware. They require a full Guest Operating System (like installing a whole Windows inside your macOS). They are heavy (GBs in size) and slow to boot.

- Containers: Virtualize the Operating System. They share the host machine’s OS kernel but keep processes isolated. They are lightweight (often MBs in size) and typically start in seconds or less.
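Once Docker is installed (section 3), you can check the kernel-sharing claim yourself. The following is a minimal sketch, not an official test: compare_kernels is a hypothetical helper name, and it assumes you are happy to download the small alpine image from Docker Hub. On a Linux host both lines should show the same kernel release; on Docker Desktop (Windows or macOS) the container reports the kernel of Docker's Linux VM instead.

```shell
# compare_kernels is a hypothetical helper name, not a Docker command.
compare_kernels() {
  # Kernel release seen by the host shell.
  echo "host kernel:      $(uname -r)"
  # Kernel release seen inside a throwaway Alpine container
  # (--rm deletes the container as soon as it exits).
  echo "container kernel: $(docker run --rm alpine uname -r)"
}

# Usage: compare_kernels
```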

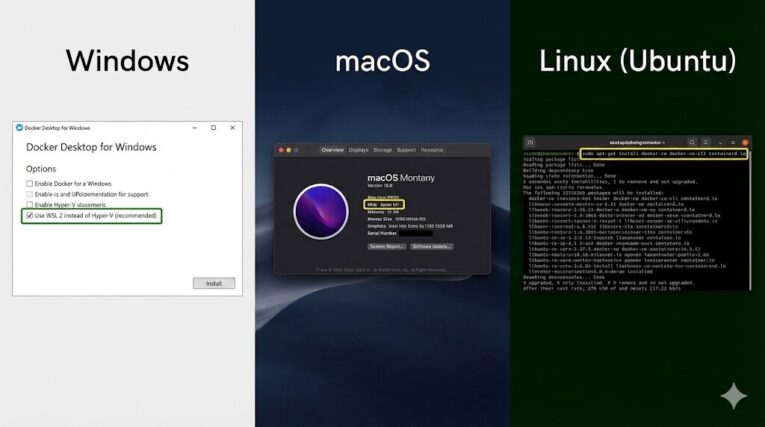

3. Step-by-Step Installation Guide

Installing Docker varies significantly depending on your hardware and Operating System. Please follow the section below that matches your computer.

3.1 Installing on Windows

For Windows, we use Docker Desktop. Modern Docker on Windows leverages WSL 2 (Windows Subsystem for Linux 2), which runs a real Linux kernel inside a lightweight, tightly integrated virtual machine, so Linux containers run with far less overhead than in a traditional VM.

- Prerequisites: Ensure you are running Windows 10 (version 2004 or higher) or Windows 11. Virtualization must be enabled in your BIOS.

- Download: Visit the Docker Hub website and download the “Docker Desktop for Windows” installer.

- Installation: Run the .exe file.

- Important: When prompted, ensure the option “Use WSL 2 instead of Hyper-V” is checked. This is critical for performance.

- WSL 2 Components: If your computer lacks the necessary Linux kernel update, Docker Desktop will pause and provide a link to Microsoft. Follow the link, install the kernel update, and continue.

- Reboot: Restart your computer.

- Verify: Open PowerShell and type docker version. If you see output describing a Client and Server, the installation succeeded.

3.2 Installing on macOS

Mac users also use Docker Desktop. However, you must choose the correct version for your processor.

- Check your Chip: Click the Apple Logo (top left) -> About This Mac.

- Intel: Choose “Mac with Intel chip”.

- Apple Silicon (M1/M2/M3): Choose “Mac with Apple chip”.

- Download and Install: Download the .dmg file. Drag the Docker icon into your Applications folder.

- Permissions: On the first launch, Docker will ask for your system password (sudo access). It needs this to configure networking tools so containers can talk to the internet. Grant this access.

- Verify: Open your Terminal app and type docker version.

3.3 Installing on Linux (Ubuntu/Debian)

Linux is the native environment for Docker, so it runs without the “Desktop” GUI layer (though one is available, the CLI is preferred). We will use the official repository.

- Clean Up: Remove old versions if they exist:

sudo apt-get remove docker docker-engine docker.io containerd runc

- Set up Repository: Install the necessary certificates:

sudo apt-get update

sudo apt-get install ca-certificates curl gnupg

- Add GPG Key: Add Docker’s official security key (on Debian, replace ubuntu with debian in this URL and in the next step):

sudo install -m 0755 -d /etc/apt/keyrings

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo gpg --dearmor -o /etc/apt/keyrings/docker.gpg

sudo chmod a+r /etc/apt/keyrings/docker.gpg

- Add Source: Add the repository to your Apt sources:

echo "deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.gpg] https://download.docker.com/linux/ubuntu $(. /etc/os-release && echo "$VERSION_CODENAME") stable" | sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

- Install:

sudo apt-get update

sudo apt-get install docker-ce docker-ce-cli containerd.io

- Post-Install (Important): By default, you need sudo to run Docker. To fix this, add your user to the docker group:

sudo usermod -aG docker $USER

Note: You must log out and log back in for this to take effect.
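Whichever platform you installed on, it is worth confirming that the client can reach the Docker engine and that a container can actually start. The sketch below assumes a Bash-like shell; verify_docker is a hypothetical helper name, and hello-world is Docker's small test image on Docker Hub.

```shell
# verify_docker is a hypothetical helper name used only for this sketch.
verify_docker() {
  # Prints the Client and Server sections; fails if the engine is unreachable.
  docker version || { echo "Docker engine not reachable" >&2; return 1; }
  # Pulls and runs Docker's tiny test image, then removes the container.
  docker run --rm hello-world
}

# Usage: verify_docker
```

On Linux, if you have not yet logged out and back in after joining the docker group, these commands may still need sudo.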

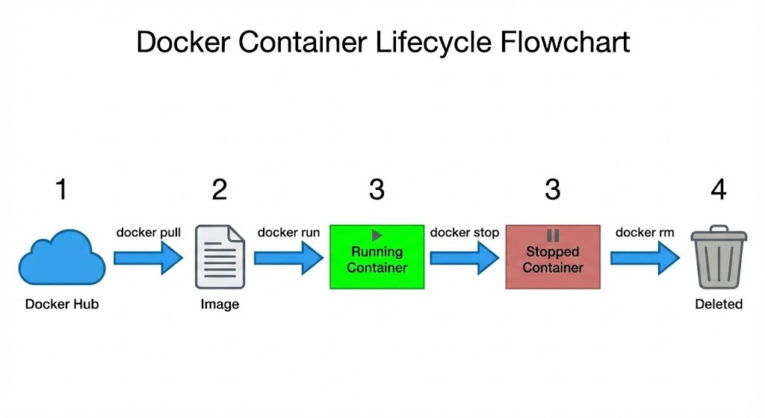

4. Obtaining Images: The docker pull Command

Now that Docker is installed, we need software to run. We get this from Docker Hub, the world’s largest library of container images.

To download an image without running it, we use docker pull. Let’s download Nginx (a popular web server).

docker pull nginx

Understanding Layers: When you run this command, you will see multiple progress bars. This is because Docker images are built in layers. If you download an image based on Ubuntu, and later download another image built on the same Ubuntu base, Docker skips the layers it already has and downloads only what is different. This layer caching saves both download time and disk space.

To check what images you have locally:

docker images

You will see the repository name (nginx), the tag (latest), the Image ID, and the size.
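Tags and layers are easier to grasp once you see them on your own machine. The sketch below is one possible way to pull a specific tag and count the layers of the result; pull_and_inspect is a hypothetical helper name, and the alpine tag in the usage line is just an example of a tag the nginx repository publishes.

```shell
# pull_and_inspect is a hypothetical helper; the tag defaults to "latest".
pull_and_inspect() {
  local image="$1" tag="${2:-latest}"
  # Download the image at a specific tag, e.g. nginx:alpine.
  docker pull "${image}:${tag}" || return 1
  # List only the local images that belong to this repository.
  docker images "$image"
  # Count the layers recorded in the image metadata.
  docker image inspect --format '{{len .RootFS.Layers}} layers' "${image}:${tag}"
}

# Usage: pull_and_inspect nginx alpine
```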

5. Running Containers: The docker run Command

This is the most frequently used command. It takes a static image and turns it into a running process.

5.1 The Basic Run

If you run the following command:

docker run nginx

Your terminal will “hang.” The cursor will blink, and you will see server logs, but you can’t type commands. This is because the container is running in the foreground. To stop it, you have to hit Ctrl+C.

5.2 Detached Mode (-d)

In most cases, we want the container to run in the background so we can keep using our terminal. We use the “detached” flag:

docker run -d nginx

Docker will print a long ID (the Container ID) and return you to the command prompt. The web server is now running silently in the background.
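Because the detached run prints the Container ID, a script can capture that ID and use it in later commands. The following is a small sketch of that idea; run_detached is a hypothetical helper name.

```shell
# run_detached is a hypothetical helper name for this sketch.
run_detached() {
  local image="$1"
  local cid
  # -d prints the full container ID on stdout; capture it for later commands.
  cid="$(docker run -d "$image")" || return 1
  echo "started container ${cid}"
  # Show only this container in the listing of running containers.
  docker ps --filter "id=${cid}"
}

# Usage: run_detached nginx
```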

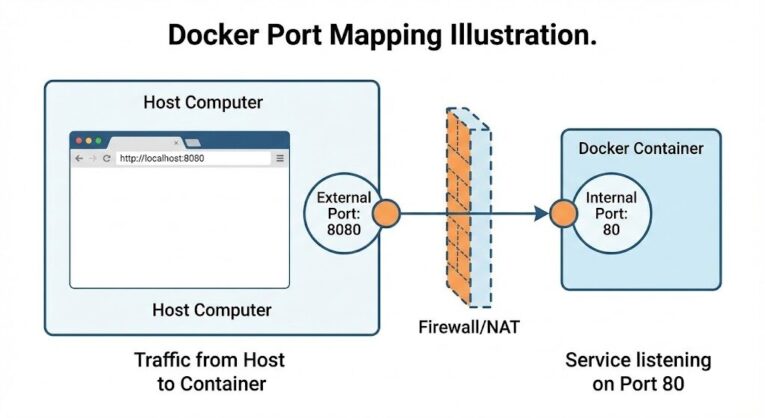

5.3 Port Mapping (-p)

This is the concept beginners struggle with most. Even though Nginx is running, you cannot access it in your browser yet. Why? Because the container is a fortress. It has its own internal network. Nginx is listening on port 80 inside that fortress, but the drawbridge is up.

We must map a port on your Host (your laptop) to the port on the Container.

- Syntax:

-p [Host_Port]:[Container_Port]

Let’s map port 8080 on our machine to port 80 inside the container:

docker run -d -p 8080:80 nginx

Now, open your web browser and navigate to http://localhost:8080. You will see the “Welcome to nginx!” message.
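The same check can be done from the terminal. The sketch below assumes curl is installed and that the chosen host port is free; publish_and_check is a hypothetical helper name, and the usage line picks port 8081 so it does not collide with the container already using 8080.

```shell
# publish_and_check is a hypothetical helper; the ports are example values.
publish_and_check() {
  local host_port="$1" container_port="$2" cid
  # Publish container_port inside the container on host_port of the host.
  cid="$(docker run -d -p "${host_port}:${container_port}" nginx)" || return 1
  # Ask Docker which host address the container port is bound to.
  docker port "$cid" "${container_port}/tcp"
  # Give nginx a moment to start, then fetch the page through the mapped port.
  sleep 1
  curl -fsS "http://localhost:${host_port}" | grep -i "welcome to nginx"
}

# Usage: publish_and_check 8081 80
```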

5.4 Naming Containers (--name)

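If you don’t name your container, Docker generates a random name like distracted_darwin. To manage containers easily, give each one a specific name. If the container from section 5.3 is still running, stop it first (docker stop followed by its Container ID); otherwise the command below fails because host port 8080 is already in use.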

docker run -d -p 8080:80 --name my-web-server nginx

6. Managing and Monitoring

Once containers are running, you need to oversee them.

6.1 Listing Containers

To see what is currently running:

docker ps

This command is your dashboard. It shows the Container ID, the Image used, the status (Uptime), and the port mappings.

To see all containers (including those that crashed or were stopped):

docker ps -a
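The default table can get wide. As a sketch of how to trim it, the helpers below use the --format and -q options of docker ps; list_containers and count_running are hypothetical names chosen for this example.

```shell
# list_containers and count_running are hypothetical helper names.
list_containers() {
  # -a includes stopped containers; --format selects and orders the columns.
  docker ps -a --format 'table {{.Names}}\t{{.Image}}\t{{.Status}}\t{{.Ports}}'
}

count_running() {
  # -q prints one container ID per line, so counting lines counts containers.
  docker ps -q | wc -l | tr -d ' '
}

# Usage: list_containers; echo "running: $(count_running)"
```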

6.2 Viewing Logs

Since our container is running in the background (detached), we can’t see if errors are happening. To view the output of the container:

docker logs my-web-server

If you want to watch the logs live (like tail -f in Linux), add the follow flag:

docker logs -f my-web-server
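For a long-running container the full log can be overwhelming. A minimal sketch of limiting the output follows; recent_logs is a hypothetical helper name and the default of 20 lines is an arbitrary choice.

```shell
# recent_logs is a hypothetical helper; the default line count is an example.
recent_logs() {
  local name="$1" lines="${2:-20}"
  # --tail keeps only the last N lines; --timestamps prefixes each line
  # with the time it was written.
  docker logs --tail "$lines" --timestamps "$name"
}

# Usage: recent_logs my-web-server 50
```

The docker logs command also accepts --since (for example --since 10m) if you prefer to filter by time rather than by line count.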

6.3 Stopping and Starting

To gracefully shut down the container:

docker stop my-web-server

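By default this sends a SIGTERM signal, allowing the application to save data and close connections before shutting down. If the container has not exited after a grace period (10 seconds by default), Docker sends SIGKILL to force it to stop.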

To start it up again:

docker start my-web-server
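If an application needs more time to shut down cleanly, the grace period can be extended. The sketch below shows one way to do that and then confirm the result; stop_gracefully is a hypothetical helper name.

```shell
# stop_gracefully is a hypothetical helper name.
stop_gracefully() {
  local name="$1" grace="${2:-10}"
  # Wait up to $grace seconds after the stop signal before Docker
  # force-kills the container.
  docker stop -t "$grace" "$name" || return 1
  # Print the resulting state (normally "exited") and the exit code.
  docker inspect --format '{{.State.Status}} (exit code {{.State.ExitCode}})' "$name"
}

# Usage: stop_gracefully my-web-server 30
```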

7. Cleaning Up: Deletion

Docker containers can take up disk space if you let them accumulate.

7.1 Removing Containers

You cannot remove a container that is currently running. You must stop it first.

docker stop my-web-server

docker rm my-web-server

Pro Tip: To force delete a running container (stops and deletes in one go):

docker rm -f my-web-server
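When stopped containers pile up, removing them one by one gets tedious. The following sketch removes every container in the exited state in one go; remove_exited is a hypothetical helper name.

```shell
# remove_exited is a hypothetical helper name.
remove_exited() {
  local ids
  # -a lists all containers, -q prints only IDs, the filter keeps exited ones.
  ids="$(docker ps -aq --filter status=exited)"
  if [ -z "$ids" ]; then
    echo "no exited containers to remove"
    return 0
  fi
  # Word splitting is intentional here: each ID becomes its own argument.
  # shellcheck disable=SC2086
  docker rm $ids
}

# Usage: remove_exited
```

Docker also ships a built-in docker container prune command that removes all stopped containers after asking for confirmation.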

7.2 Removing Images

Deleting the container does not delete the image (the recipe). To remove the Nginx image from your hard drive, first remove every container created from it (including stopped ones), then run:

docker rmi nginx

7.3 The “Nuke” Option

If you have been experimenting and have dozens of stopped containers and unused networks, you can clean everything up with one powerful command. Warning: This deletes all stopped containers, all networks not used by a container, all dangling images, and leftover build cache.

docker system prune
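Before and after pruning, it helps to see how much space Docker is actually using. The sketch below wraps the prune in two disk-usage reports; prune_report is a hypothetical helper name.

```shell
# prune_report is a hypothetical helper name.
prune_report() {
  # Show how much space images, containers, volumes, and build cache use.
  docker system df
  # -f skips the confirmation prompt; drop it to review before deleting.
  docker system prune -f
  # Show usage again to see what was reclaimed.
  docker system df
}

# Usage: prune_report
```

Adding -a to docker system prune additionally removes every image that no container is using, so use it with care.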

8. Advanced Topic: Data Persistence (Volumes)

By default, Docker containers are ephemeral. This means if you delete the container, any file you created inside it disappears. This is catastrophic for databases.

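To save data, we use Volumes. The -v flag maps storage outside the container to a folder inside it. In the form shown here, which Docker calls a bind mount, the outside storage is a folder on your real hard drive.

docker run -d -e MYSQL_ROOT_PASSWORD=change-me -v /home/user/my-data:/var/lib/mysql mysql

In this example, even if the MySQL container is deleted, the data remains safely stored in /home/user/my-data on your laptop. The -e flag sets an environment variable; the official mysql image refuses to start without a root password setting such as MYSQL_ROOT_PASSWORD (change-me is a placeholder).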
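The example above maps a host folder. Docker can also manage the storage itself as a named volume, which avoids hard-coding a host path. The sketch below is one way to set that up; run_mysql_with_volume is a hypothetical helper, and the volume name, container name, and password in the usage line are placeholders.

```shell
# run_mysql_with_volume is a hypothetical helper; the volume name, container
# name, and password passed to it are placeholders chosen by the caller.
run_mysql_with_volume() {
  local volume="$1" name="$2" password="$3"
  # Create a Docker-managed named volume (no host path needed).
  docker volume create "$volume" || return 1
  # Mount the volume at MySQL's data directory. The official mysql image
  # needs a root password (or an equivalent setting) to initialize.
  docker run -d --name "$name" \
    -e MYSQL_ROOT_PASSWORD="$password" \
    -v "${volume}:/var/lib/mysql" \
    mysql
}

# Usage: run_mysql_with_volume db-data my-db change-me
```

The volume outlives the container: after docker rm -f my-db, docker volume ls still lists db-data, and a new container started with the same -v argument sees the old data.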

Conclusion

You have now crossed the threshold from Docker novice to capable user. You understand the architecture distinguishing VMs from Containers, you have successfully installed the engine on your specific OS, and you have mastered the lifecycle commands: pull, run, stop, and rm.

Your Next Step: Try to “Dockerize” a simple application. Create a small index.html file on your computer, and use the Nginx container with a Volume map to serve your own HTML file instead of the default Nginx welcome page. This will solidify your understanding of how containers interact with your local file system.
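If you get stuck on this exercise, here is one possible starting point rather than the only solution. It assumes a Linux or macOS shell and relies on /usr/share/nginx/html being the default document root of the official nginx image; serve_site is a hypothetical helper, and the container name my-site, the folder, and the port are example values.

```shell
# serve_site is a hypothetical helper; the folder, port, and the container
# name "my-site" are example values.
serve_site() {
  local dir="$1" port="${2:-8080}"
  mkdir -p "$dir" || return 1
  # Create a minimal page only if none exists yet.
  [ -f "$dir/index.html" ] || echo '<h1>Hello from my own container</h1>' > "$dir/index.html"
  # Bind-mount the folder (as an absolute path, read-only) over nginx's
  # default document root.
  docker run -d --name my-site -p "${port}:80" \
    -v "$(cd "$dir" && pwd):/usr/share/nginx/html:ro" \
    nginx
}

# Usage: serve_site ./site 8080   # then open http://localhost:8080
```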

Thank you 🙂